Cloud Native | Object Storage | Building a MinIO distributed cluster and first steps with it (usable in production)

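Preface:

As a lightweight object storage service, MinIO is fairly easy to install as a single instance. A distributed cluster, which is built by extending the single-instance setup, greatly improves the safety and reliability of the stored data.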

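A distributed MinIO cluster has the following requirements:

- All nodes running distributed MinIO must use the same access key and secret key in order to connect to each other. It is recommended to export both keys (the MinIO access key and the MinIO secret key) as environment variables on every node before running the MinIO server command.

- MinIO creates erasure-coding (EC) sets of 4 to 16 drives.

- MinIO picks the largest EC set size that evenly divides the total number of drives. For example, 8 drives are used as a single EC set of size 8, not as two EC sets of size 4.

- All nodes running distributed MinIO should be homogeneous: the same operating system, the same number of disks, and the same network interconnect.

- The clocks of the servers running distributed MinIO must not differ by more than 15 minutes.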
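The set-size rule in the list above can be sketched as a small shell function. This is only an illustration of the rule as stated here (largest size between 4 and 16 that divides the drive count); `largest_ec_set_size` is a hypothetical helper, and the real MinIO logic also takes the node layout into account.

```shell
#!/usr/bin/env bash
# Sketch: pick the largest erasure-set size in [4, 16] that evenly divides
# the total drive count. Hypothetical helper, not MinIO's actual code.
largest_ec_set_size() {
  local total="$1" size
  for ((size = 16; size >= 4; size--)); do
    if (( total % size == 0 )); then
      echo "$size"
      return 0
    fi
  done
  echo "no valid set size for $total drives" >&2
  return 1
}

largest_ec_set_size 8    # the example from the list above
largest_ec_set_size 16   # this article's cluster: 4 nodes x 4 drives
```

For the cluster in this article, 16 drives end up in one set of 16 under this rule.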

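For a single-instance deployment, see my earlier post: Linux|minio对象存储服务的部署和初步使用总结_linux部署minio-CSDN博客

First, MinIO can be installed in many ways: Docker, a standalone binary, an RPM package, or as a workload inside Kubernetes. After weighing the options, this article uses the RPM package. It is essentially the same as the binary install, but it is simple enough and saves a lot of trouble.

Second, there is the question of drives. MinIO requires each drive it uses to be an empty, separately mounted disk. Depending on the use case, that requirement can be relaxed: for example, I only need MinIO as the place where Velero stores Kubernetes backups, so the demands on the storage are modest. This article therefore uses file-backed virtual disks mounted as drives.

Finally, there is console-address. Every MinIO instance ships with a built-in console, that is, a web management UI. The console starts even if the configuration does not mention it, but it then listens on a random port. If you want the console to be harder to find, leave console-address out, let MinIO pick a random port, and look the port up in the logs when you need the console.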

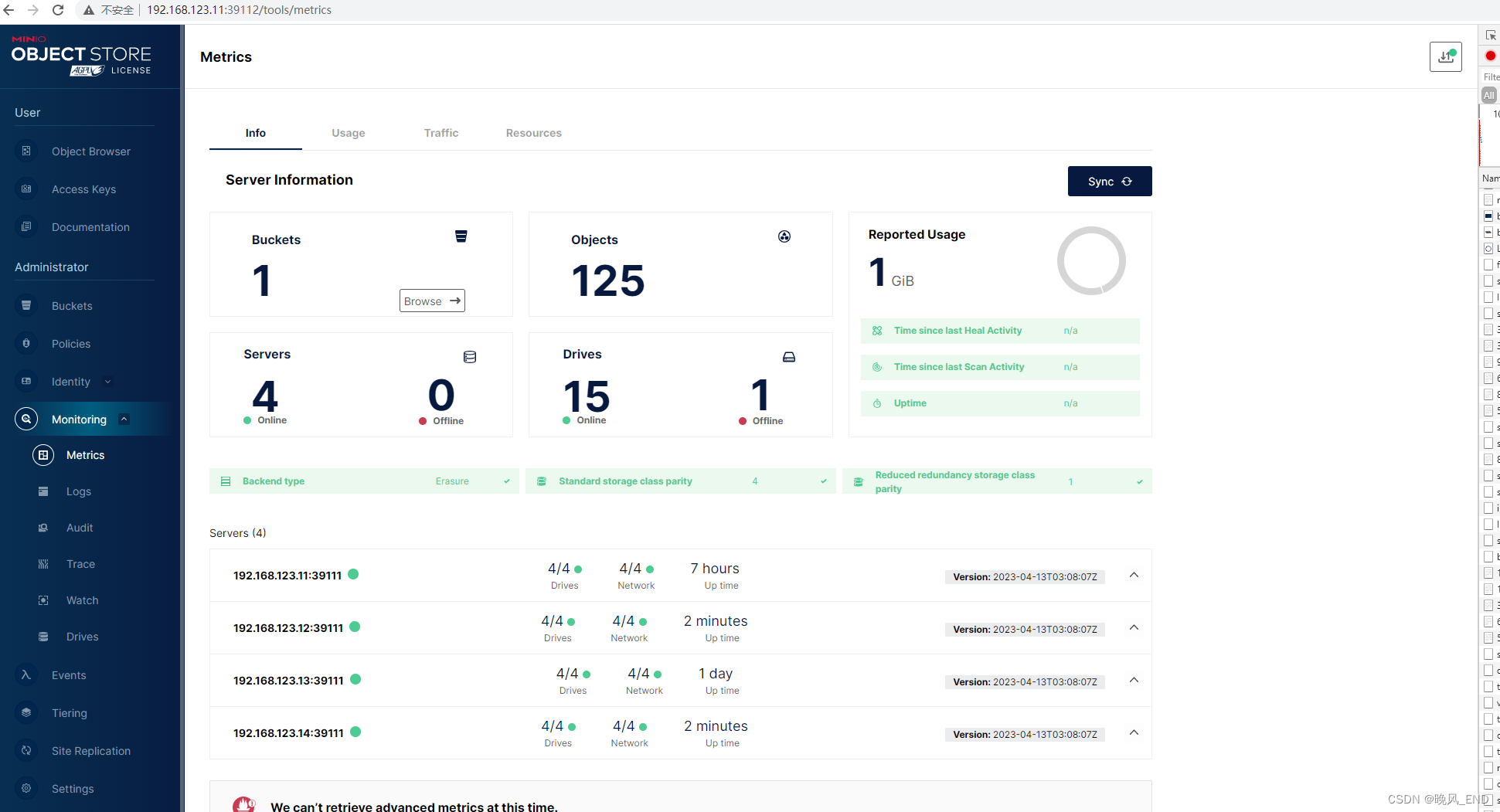

This walkthrough uses four VMware virtual machines with the IPs 192.168.123.11, 192.168.123.12, 192.168.123.13, and 192.168.123.14. The operating system is CentOS 7.7 and the MinIO package is minio-20230413030807.0.0.x86_64.

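1.

Initializing the cluster environment

These are routine steps, so I will not go into detail: disable the firewall, disable SELinux, and set up time synchronization. This cluster does not need internal hostname mappings.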
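For readers who want those routine steps spelled out, here is a minimal sketch for CentOS 7. Using chrony for time synchronization is my assumption (the article only says a time server is needed), and `run` and `init_node` are hypothetical helper names. By default the script only prints what it would do.

```shell
#!/usr/bin/env bash
# Sketch of the per-node preparation on CentOS 7. With DRY_RUN=1 (default)
# the commands are only printed; set DRY_RUN=0 and run as root to apply them.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

init_node() {
  # Firewall off, now and after reboot.
  run systemctl stop firewalld
  run systemctl disable firewalld
  # SELinux: permissive immediately, disabled after the next reboot.
  run setenforce 0
  run sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config
  # Time synchronization (assumption: chrony).
  run yum install -y chrony
  run systemctl enable chronyd
  run systemctl start chronyd
}

init_node
```

Run it once with the default dry run to review the commands, then with `DRY_RUN=0` as root on each node.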

Kernel tuning (run on every node):

cat > /etc/sysctl.d/minio.conf <<EOF

# maximum number of open files/file descriptors

fs.file-max = 4194303

# use as little swap space as possible

vm.swappiness = 1

# prioritize application RAM against disk/swap cache

vm.vfs_cache_pressure = 50

# minimum free memory

vm.min_free_kbytes = 1000000

# follow mellanox best practices https://community.mellanox.com/s/article/linux-sysctl-tuning

# the following changes are recommended for improving IPv4 traffic performance by Mellanox

# disable the TCP timestamps option for better CPU utilization

net.ipv4.tcp_timestamps = 0

# enable the TCP selective acks option for better throughput

net.ipv4.tcp_sack = 1

# increase the maximum length of processor input queues

net.core.netdev_max_backlog = 250000

# increase the TCP maximum and default buffer sizes using setsockopt()

net.core.rmem_max = 4194304

net.core.wmem_max = 4194304

net.core.rmem_default = 4194304

net.core.wmem_default = 4194304

net.core.optmem_max = 4194304

# increase memory thresholds to prevent packet dropping:

net.ipv4.tcp_rmem = 4096 87380 4194304

net.ipv4.tcp_wmem = 4096 65536 4194304

# enable low latency mode for TCP:

net.ipv4.tcp_low_latency = 1

# the following variable is used to tell the kernel how much of the socket buffer

# space should be used for TCP window size, and how much to save for an application

# buffer. A value of 1 means the socket buffer will be divided evenly between.

# TCP windows size and application.

net.ipv4.tcp_adv_win_scale = 1

# maximum number of incoming connections

net.core.somaxconn = 65535

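# maximum number of packets queued

# NOTE: this key is also set to 250000 earlier in this file. Entries are applied in order, so this later value (10000) is the one that takes effect.

net.core.netdev_max_backlog = 10000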

# queue length of completely established sockets waiting for accept

net.ipv4.tcp_max_syn_backlog = 4096

# time to wait (seconds) for FIN packet

net.ipv4.tcp_fin_timeout = 15

# disable icmp send redirects

net.ipv4.conf.all.send_redirects = 0

# disable icmp accept redirect

net.ipv4.conf.all.accept_redirects = 0

# drop packets with LSR or SSR

net.ipv4.conf.all.accept_source_route = 0

# MTU discovery, only enable when ICMP blackhole detected

net.ipv4.tcp_mtu_probing = 1

EOF

sysctl -p /etc/sysctl.d/minio.conf
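To confirm the settings were really applied, a small check like the following can help. It is a sketch: `sysctl_pairs` and `verify_sysctl` are hypothetical helper names, and the comparison is a plain string match after whitespace normalization. Since the file sets net.core.netdev_max_backlog twice, one of the two entries is expected to show up as a mismatch.

```shell
#!/usr/bin/env bash
# Print "key value" pairs from a sysctl-style file, skipping comments and blanks.
sysctl_pairs() {
  sed -e 's/#.*//' -e '/^[[:space:]]*$/d' "$1" |
    while IFS='=' read -r key value; do
      # xargs trims surrounding whitespace and collapses inner runs of it.
      key="$(echo "$key" | xargs)"
      value="$(echo "$value" | xargs)"
      echo "$key $value"
    done
}

# Compare each expected value with what the running kernel reports.
verify_sysctl() {
  local key expected actual
  sysctl_pairs "$1" | while read -r key expected; do
    actual="$(sysctl -n "$key" 2>/dev/null | xargs)"
    if [ "$actual" = "$expected" ]; then
      echo "OK       $key = $actual"
    else
      echo "MISMATCH $key: expected '$expected', got '${actual:-<unset>}'"
    fi
  done
}

# Usage (on a node): verify_sysctl /etc/sysctl.d/minio.conf
```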

# `Transparent Hugepage Support`*: This is a Linux kernel feature intended to improve

# performance by making more efficient use of processor’s memory-mapping hardware.

# But this may cause https://blogs.oracle.com/linux/performance-issues-with-transparent-huge-pages-thp

# for non-optimized applications. As most Linux distributions set it to `enabled=always` by default,

# we recommend changing this to `enabled=madvise`. This will allow applications optimized

# for transparent hugepages to obtain the performance benefits, while preventing the

# associated problems otherwise. Also, set `transparent_hugepage=madvise` on your kernel

# command line (e.g. in /etc/default/grub) to persistently set this value.

echo "Enabling THP madvise"

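echo madvise | sudo tee /sys/kernel/mm/transparent_hugepage/enabled

2.

Creating the drives for the cluster

Run on every node. Each virtual disk is 1 GB and is mounted under /data1. In a production environment, persist the mounts by adding them to /etc/fstab (for example with vim /etc/fstab).

MinIO runs as the unprivileged user minio-user, which is set up so that it cannot log in; the data directories are handed over to that user and group.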

mkdir -p /data1/minio{1..4}

groupadd -r minio-user

# -s /sbin/nologin makes the account non-interactive, as intended

useradd -M -r -s /sbin/nologin -g minio-user minio-user

chown -R minio-user:minio-user /data1/

dd if=/dev/zero of=/media/testfile1 bs=200M count=5

dd if=/dev/zero of=/media/testfile2 bs=200M count=5

dd if=/dev/zero of=/media/testfile3 bs=200M count=5

dd if=/dev/zero of=/media/testfile4 bs=200M count=5

mkfs.xfs /media/testfile1

mkfs.xfs /media/testfile2

mkfs.xfs /media/testfile3

mkfs.xfs /media/testfile4

mount -t xfs /media/testfile1 /data1/minio1/

mount -t xfs /media/testfile2 /data1/minio2/

mount -t xfs /media/testfile3 /data1/minio3/

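mount -t xfs /media/testfile4 /data1/minio4/

# the root directory of a freshly created XFS filesystem is owned by root, so repeat the chown after mounting

chown -R minio-user:minio-user /data1/

Persisting the mounts:

tail -n 4 /etc/fstab

/media/testfile1 /data1/minio1/ xfs defaults 0 0

/media/testfile2 /data1/minio2/ xfs defaults 0 0

/media/testfile3 /data1/minio3/ xfs defaults 0 0

/media/testfile4 /data1/minio4/ xfs defaults 0 0

[root@node1 ~]# df -ah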

Filesystem Size Used Avail Use% Mounted on

sysfs 0 0 0 - /sys

proc 0 0 0 - /proc

devtmpfs 2.0G 0 2.0G 0% /dev

securityfs 0 0 0 - /sys/kernel/security

tmpfs 2.0G 52K 2.0G 1% /dev/shm

devpts 0 0 0 - /dev/pts

tmpfs 2.0G 68M 1.9G 4% /run

tmpfs 2.0G 0 2.0G 0% /sys/fs/cgroup

... (output omitted) ...

/dev/loop0 997M 99M 899M 10% /data1/minio1

/dev/loop1 997M 99M 899M 10% /data1/minio2

/dev/loop2 997M 99M 899M 10% /data1/minio3

/dev/loop3 997M 99M 899M 10% /data1/minio4
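The repeated dd, mkfs.xfs, and mount commands in this section can be condensed into a loop. The following is a sketch under the same paths and sizes used here; `run`, `make_drives`, and `fstab_lines` are hypothetical helper names, and by default nothing is executed, the commands are only printed.

```shell
#!/usr/bin/env bash
# Sketch: create N file-backed XFS "drives" and mount them under /data1.
# DRY_RUN=1 (default) only prints the commands; use DRY_RUN=0 as root to run them.
run() { if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi; }

make_drives() {
  local count="$1" i
  for ((i = 1; i <= count; i++)); do
    run mkdir -p "/data1/minio$i"
    run dd if=/dev/zero of="/media/testfile$i" bs=200M count=5
    run mkfs.xfs "/media/testfile$i"
    run mount -t xfs "/media/testfile$i" "/data1/minio$i"
    # The root of a fresh XFS filesystem belongs to root, so hand it over
    # to the service account after mounting.
    run chown minio-user:minio-user "/data1/minio$i"
  done
}

# Emit the matching /etc/fstab lines, in the same format as shown in this section.
fstab_lines() {
  local count="$1" i
  for ((i = 1; i <= count; i++)); do
    echo "/media/testfile$i /data1/minio$i/ xfs defaults 0 0"
  done
}

make_drives 4
fstab_lines 4
```

The output of `fstab_lines 4` can be appended to /etc/fstab once the dry run looks right.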

3.

Installing and deploying MinIO

Run on every node:

rpm -ivh minio-20230413030807.0.0.x86_64.rpm

After the installation, take a look at the systemd unit that starts MinIO:

[root@node1 ~]# cat /etc/systemd/system/minio.service

[Unit]

Description=MinIO

Documentation=https://docs.min.io

Wants=network-online.target

After=network-online.target

AssertFileIsExecutable=/usr/local/bin/minio

[Service]

WorkingDirectory=/usr/local

User=minio-user

Group=minio-user

ProtectProc=invisible

EnvironmentFile=-/etc/default/minio

ExecStartPre=/bin/bash -c "if [ -z \"${MINIO_VOLUMES}\" ]; then echo \"Variable MINIO_VOLUMES not set in /etc/default/minio\"; exit 1; fi"

ExecStart=/usr/local/bin/minio server $MINIO_OPTS $MINIO_VOLUMES

# Let systemd restart this service always

Restart=always

# Specifies the maximum file descriptor number that can be opened by this process

LimitNOFILE=1048576

# Specifies the maximum number of threads this process can create

TasksMax=infinity

# Disable timeout logic and wait until process is stopped

TimeoutStopSec=infinity

SendSIGKILL=no

[Install]

WantedBy=multi-user.target

# Built for ${project.name}-${project.version} (${project.name})

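Looking at this unit file, the user side is already taken care of, since the user was created earlier. What remains are the two key variables $MINIO_OPTS and $MINIO_VOLUMES, and the file that holds them, /etc/default/minio.

Right after installation this file does not exist, so we have to create it ourselves (identical on every node):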

cat >/etc/default/minio <<EOF

# Set the hosts and volumes MinIO uses at startup

# The command uses MinIO expansion notation {x...y} to denote a

# sequential series.

#

# The following example covers four MinIO hosts

# with 4 drives each at the specified hostname and drive locations.

# The command includes the port that each MinIO server listens on

# (default 9000)

## Drive locations. The cluster nodes are 192.168.123.11 to 192.168.123.14, written here in MinIO's expansion notation

MINIO_VOLUMES="http://192.168.123.{11...14}:39111/data1/minio{1...4}"

# Set all MinIO server options

#

# The following explicitly sets the MinIO API listen address to port 39111

# and the MinIO Console listen address to port 39112 on all network

# interfaces. Without --console-address the console port is selected dynamically.

## Address of the MinIO console, i.e. the web management UI

MINIO_OPTS="--address :39111 --console-address :39112"

# Set the root username. This user has unrestricted permissions to

# perform S3 and administrative API operations on any resource in the

# deployment.

#

# Defer to your organizations requirements for superadmin user name.

# Login user name for the console

MINIO_ROOT_USER=minioadmin

# Set the root password

#

# Use a long, random, unique string that meets your organizations

# requirements for passwords.

# Login password for the console

MINIO_ROOT_PASSWORD=minioadmin

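EOF

Notes on this configuration file:

MINIO_VOLUMES="http://192.168.123.{11...14}:39111/data1/minio{1...4}"

Because the four VMware servers have consecutive IPs, the hosts can be written in this short form. It is tempting to split it per host, as in MINIO_VOLUMES="http://192.168.123.11:39111/data1/minio{1...4} http://192.168.123.12:39111/data1/minio{1...4} http://192.168.123.13:39111/data1/minio{1...4} http://192.168.123.14:39111/data1/minio{1...4}"

Be careful with that form, though: MinIO treats each space-separated argument that contains {x...y} as its own server pool, so the split form describes four single-node pools rather than one 16-drive pool. For this cluster, keep the short form.

MINIO_OPTS="--address :39111 --console-address :39112"

can be changed to MINIO_OPTS="--address :39111". In that form the console uses a random port when MinIO starts. The MinIO server (API) port here is 39111; the default is 9000, and I suggest changing it so the service is less exposed to scans of default ports.

MINIO_ROOT_USER=minioadmin

MINIO_ROOT_PASSWORD=minioadmin

These two are the login account and password for the MinIO web console. In production, use a strong password.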
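To see exactly which endpoints the `{11...14}` and `{1...4}` notation stands for, the pattern can be expanded with a few lines of bash. This is a sketch of my own, not something MinIO ships: `expand_ellipsis` is a hypothetical helper that only handles plain numeric ranges, and the order in which it prints the endpoints is not necessarily the order MinIO uses internally.

```shell
#!/usr/bin/env bash
# Recursively expand MinIO-style {lo...hi} numeric ranges in a string and
# print one resulting endpoint per line.
expand_ellipsis() {
  local s="$1"
  local re='^(.*)\{([0-9]+)\.\.\.([0-9]+)\}(.*)$'
  if [[ "$s" =~ $re ]]; then
    # Copy the captures first: the recursive call overwrites BASH_REMATCH.
    local pre="${BASH_REMATCH[1]}" lo="${BASH_REMATCH[2]}"
    local hi="${BASH_REMATCH[3]}" post="${BASH_REMATCH[4]}"
    local i
    for ((i = lo; i <= hi; i++)); do
      expand_ellipsis "${pre}${i}${post}"
    done
  else
    echo "$s"
  fi
}

expand_ellipsis 'http://192.168.123.{11...14}:39111/data1/minio{1...4}'
```

The call prints 16 lines, one per drive, which matches the drive count MinIO reports at startup.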

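Finally, give the service user ownership of the file:

chown minio-user:minio-user /etc/default/minio

4.

Starting the MinIO service

systemctl enable minio

systemctl start minio

systemctl status minio

The last command prints output like the following, which shows that the service is healthy: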

Dec 28 23:45:57 node1 minio[23188]: MinIO Object Storage Server

Dec 28 23:45:57 node1 minio[23188]: Copyright: 2015-2023 MinIO, Inc.

Dec 28 23:45:57 node1 minio[23188]: License: GNU AGPLv3 <https://www.gnu.org/licenses/agpl-3.0.html>

Dec 28 23:45:57 node1 minio[23188]: Version: RELEASE.2023-04-13T03-08-07Z (go1.20.3 linux/amd64)

Dec 28 23:45:57 node1 minio[23188]: Status: 16 Online, 0 Offline.

Dec 28 23:45:57 node1 minio[23188]: API: http://10.96.24.248:39111 http://192.168.123.11:39111 http://169.254.25.10:39111 http://10.96.0.3:39111 http://10.96.0.1:39111 http://172.17.0.1:39111 http://10.244.26.0:39111 http://127.0.0.1:39111

Dec 28 23:45:57 node1 minio[23188]: Console: http://10.96.24.248:43585 http://192.168.123.11:43585 http://169.254.25.10:43585 http://10.96.0.3:43585 http://10.96.0.1:43585 http://172.17.0.1:43585 http://10.244.26.0:43585 http://127.0.0.1:43585

Dec 28 23:45:57 node1 minio[23188]: Documentation: https://min.io/docs/minio/linux/index.html

Dec 28 23:45:58 node1 minio[23188]: You are running an older version of MinIO released 8 months ago

Dec 28 23:45:58 node1 minio[23188]: Update: Run `mc admin update`

The key line is Dec 28 23:45:57 node1 minio[23188]: Status: 16 Online, 0 Offline. It means that all 16 drives were discovered.
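If you would rather check that status line from a script than read it by eye, something like this works on the log format shown here. It is a sketch: `parse_status` and `all_drives_online` are hypothetical helpers that read log lines on standard input.

```shell
#!/usr/bin/env bash
# Extract "<online> <offline>" from MinIO startup "Status:" log lines.
parse_status() {
  sed -n 's/.*Status:[[:space:]]*\([0-9][0-9]*\) Online, \([0-9][0-9]*\) Offline.*/\1 \2/p'
}

# Succeeds only if the most recent status line reports zero offline drives.
all_drives_online() {
  local status
  status="$(parse_status | tail -n 1)"
  [ -n "$status" ] && [ "${status##* }" = "0" ]
}

# Usage on a node:
#   journalctl -u minio --no-pager | all_drives_online && echo "all drives online"
```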

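At the bottom there is a warning that the MinIO version is somewhat old. It can be ignored: You are running an older version of MinIO released 8 months ago

There was a second warning that I can no longer find, but it does not matter much either. Roughly, it said that the kernel is too old and that MinIO performs better on kernel 4.x or later.

The current kernel version: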

[root@node4 ~]# uname -a

Linux node4 3.10.0-1062.el7.x86_64 #1 SMP Wed Aug 7 18:08:02 UTC 2019 x86_64 x86_64 x86_64 GNU/Linux

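The port of the console, i.e. the web management UI, appears in the same output (in this run it is a randomly assigned port, which is what happens when --console-address is not set):

Console: http://10.96.24.248:35382 http://192.168.123.14:35382 http://169.254.25.10:35382 http://10.96.0.3:35382 http://10.96.0.1:35382 http://172.17.0.1:35382 http://10.244.41.0:35382 http://127.0.0.1:35382

The address to use is clearly http://192.168.123.14:35382. The other IPs are probably listed because MinIO runs on the same hosts as Kubernetes; they can be ignored.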

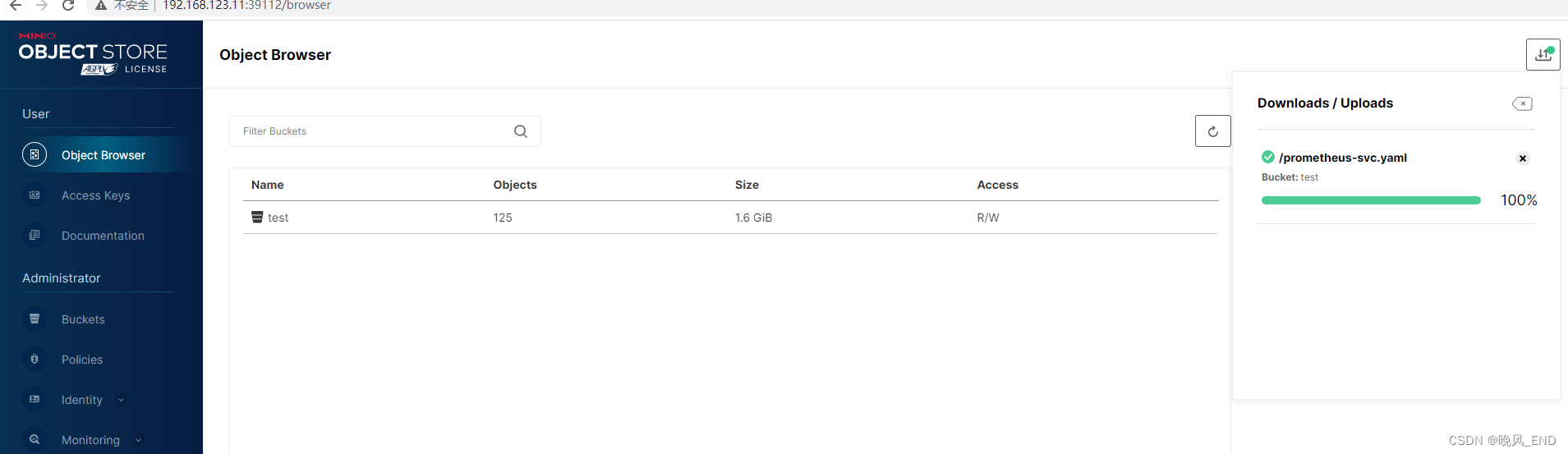

Log in to the web console:

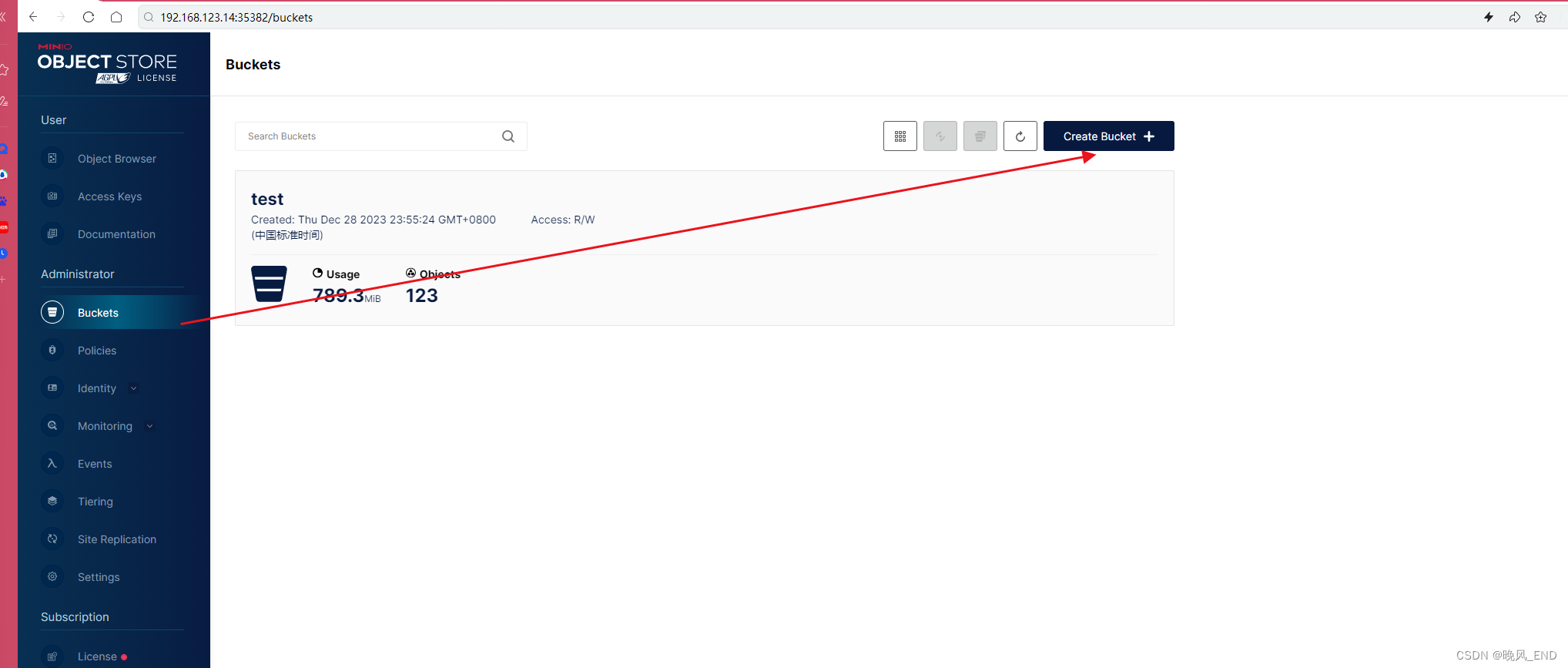

Create a bucket (I had already created one, named test):

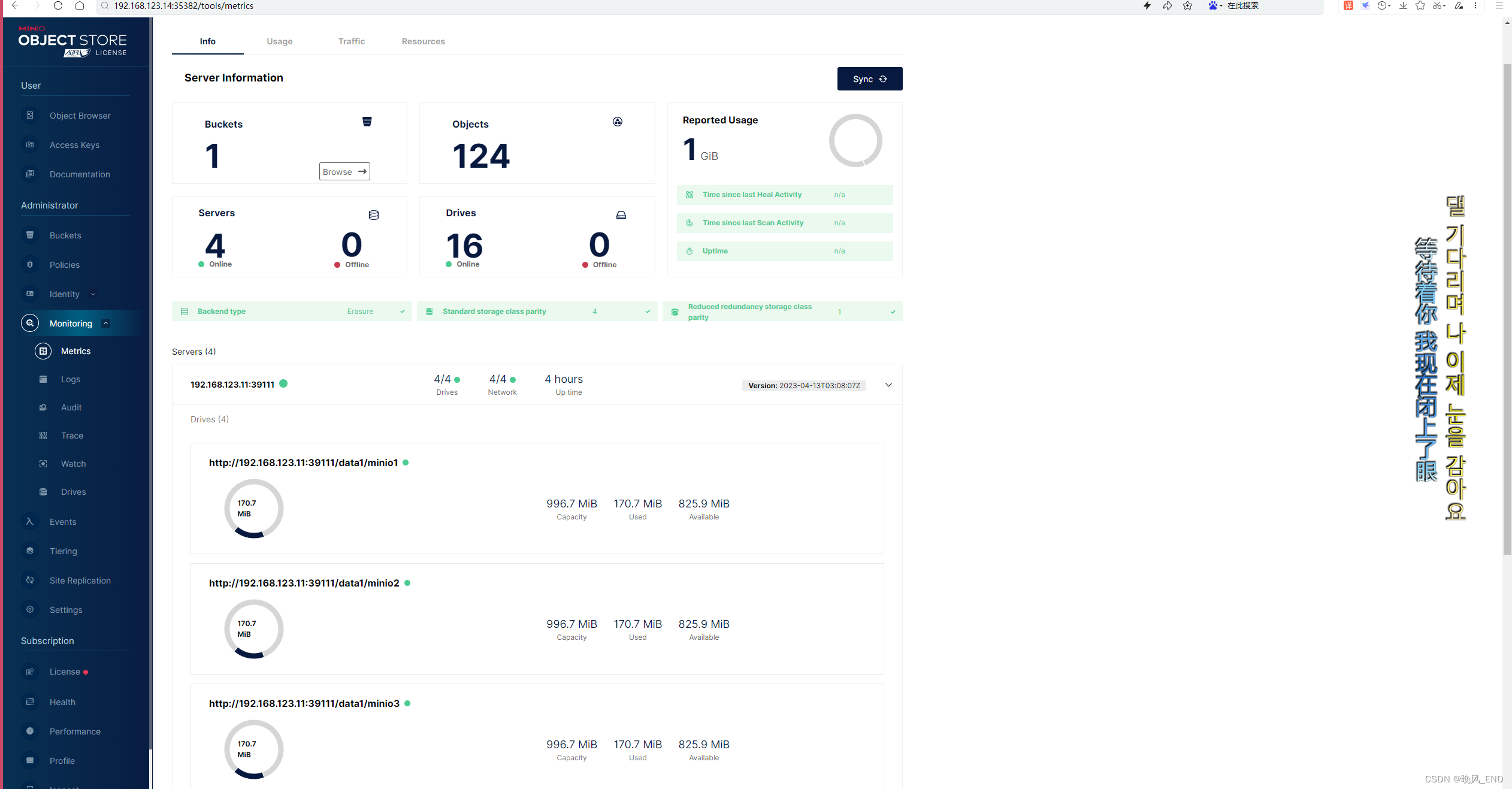

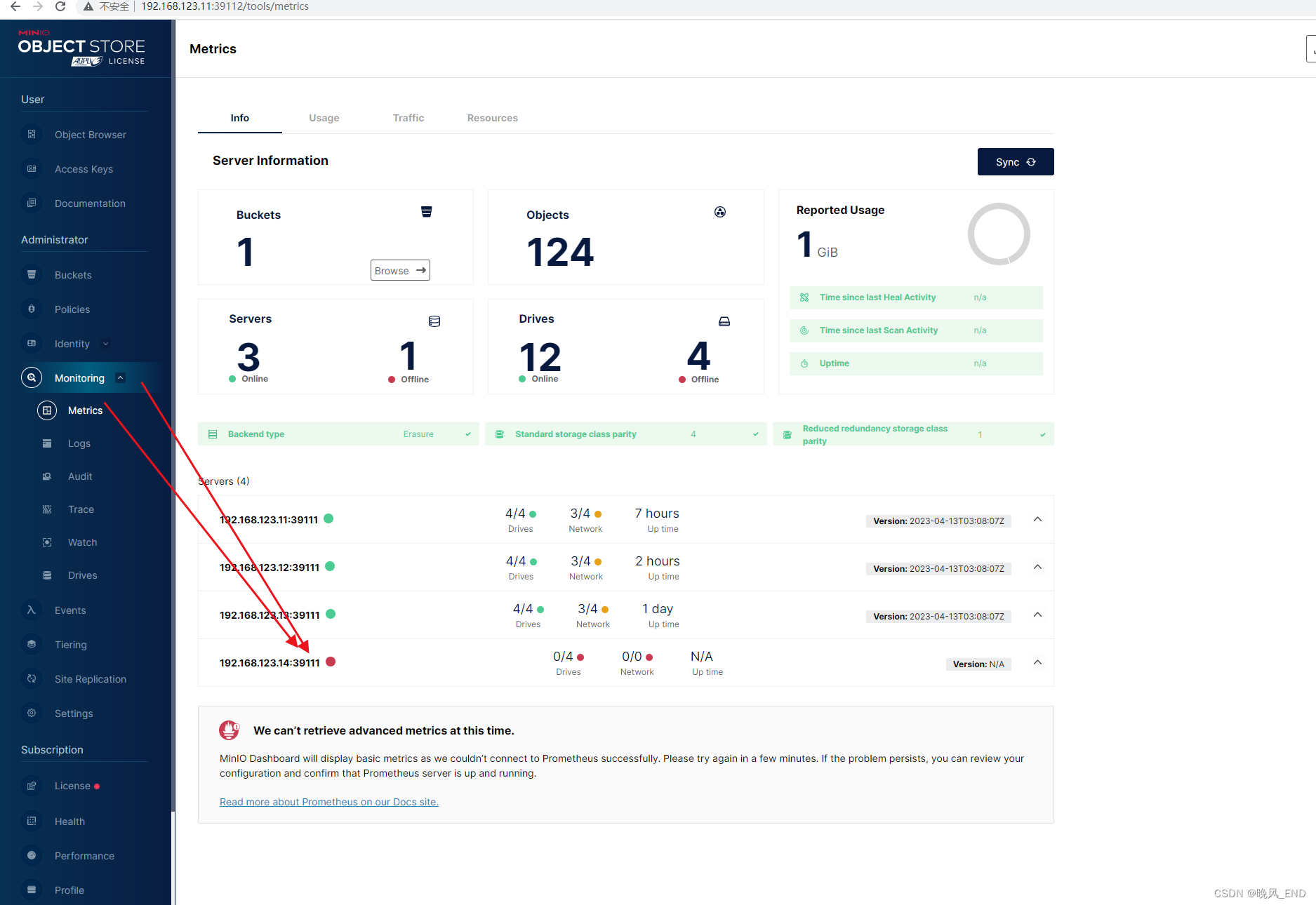

Monitoring shows how much disk space is in use:

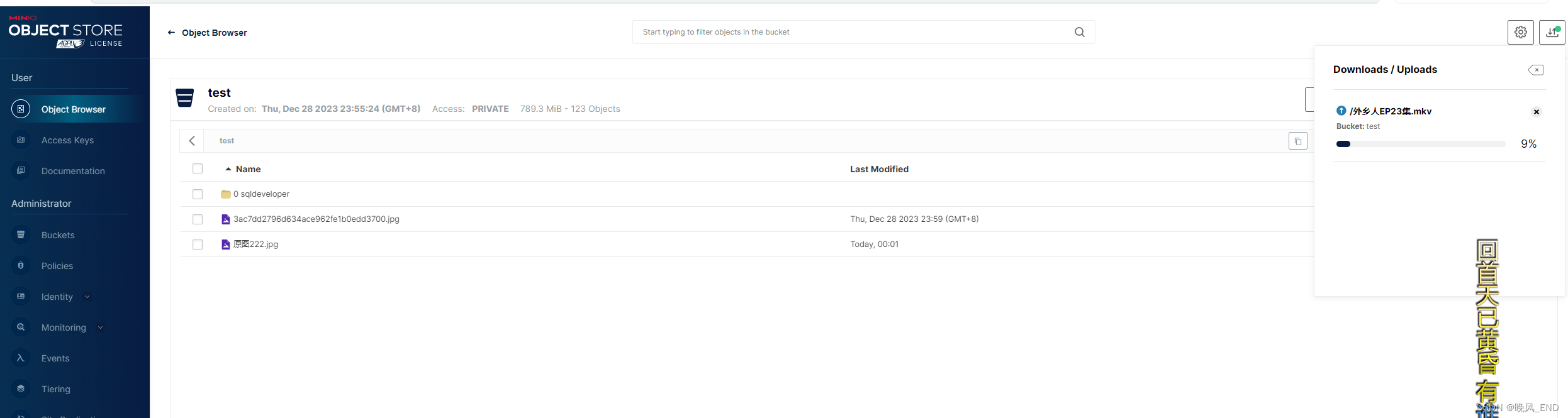

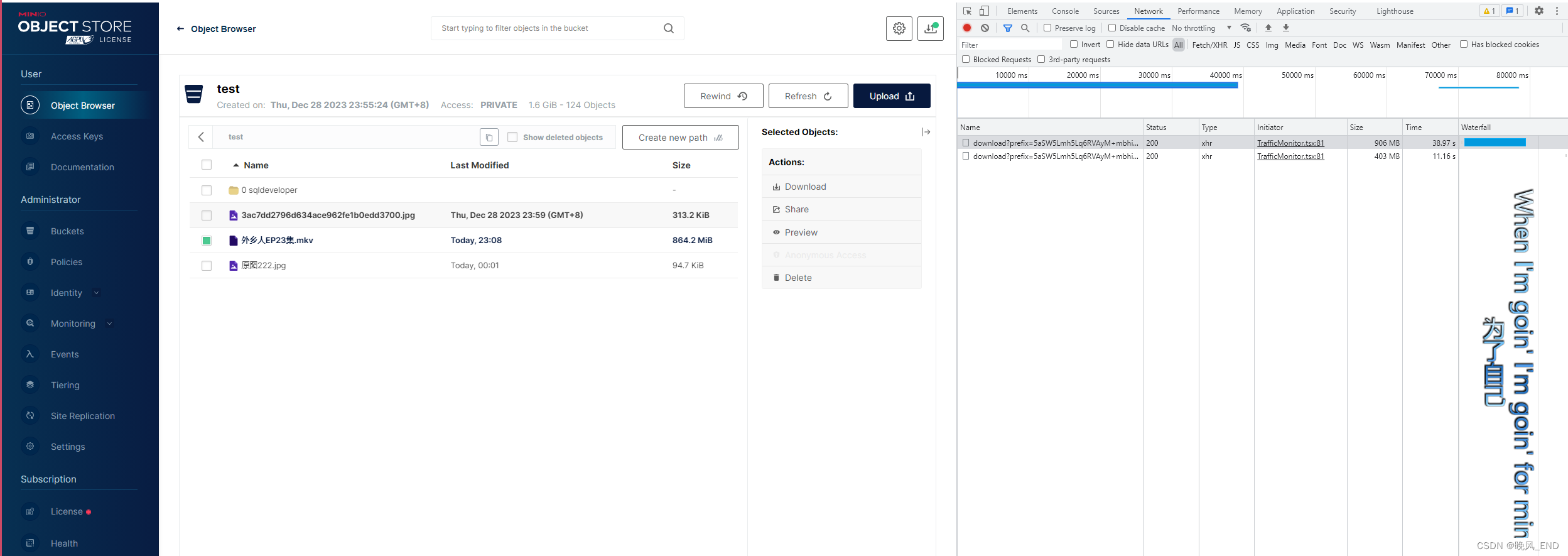

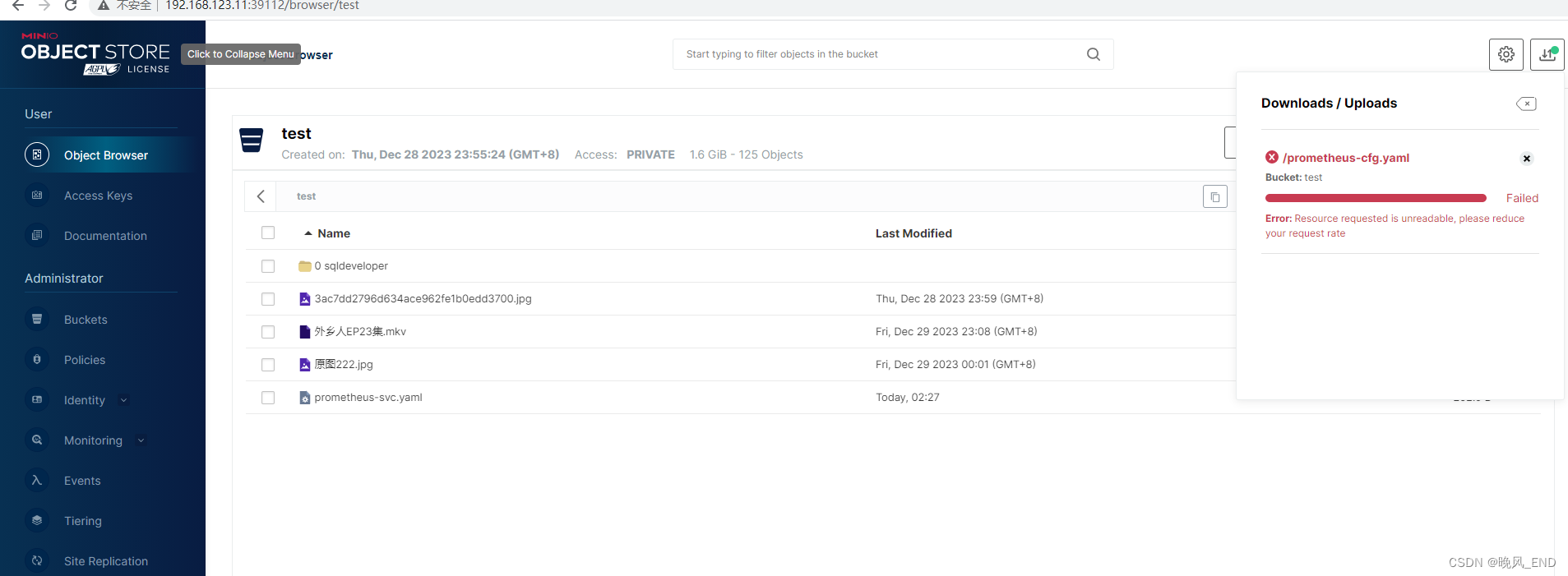

Upload a large file:

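The transfer is quite fast, much faster than FTP and the like.

Now check the disk usage again:

As you can see, a file of a bit over 800 MB was split up by MinIO and stored across all the nodes.

Download the file:

As you can see, a download first collects the pieces of the file from the MinIO server nodes and then delivers it.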
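The same bucket, upload, and listing steps can also be done from the command line with the MinIO client `mc`. This is a sketch under a few assumptions: `mc` is installed separately (the RPM used here only provides the server), the alias name `myminio` and the file path are made up for illustration, and the credentials are the demo ones from /etc/default/minio. By default the commands are only printed.

```shell
#!/usr/bin/env bash
# DRY_RUN=1 (default) only prints the commands; set DRY_RUN=0 to execute them.
run() { if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi; }

ALIAS="myminio"                          # hypothetical alias name
ENDPOINT="http://192.168.123.11:39111"   # API port from this article

mc_smoke_test() {
  local file="$1"
  run mc alias set "$ALIAS" "$ENDPOINT" minioadmin minioadmin
  run mc mb --ignore-existing "$ALIAS/test"   # bucket name used in this article
  run mc cp "$file" "$ALIAS/test/"
  run mc ls "$ALIAS/test"
  run mc admin info "$ALIAS"                  # shows per-node drive status
}

mc_smoke_test /tmp/bigfile.bin   # hypothetical test file
```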

5.

Testing high availability

Shut down node 192.168.123.14.

Files can still be downloaded and uploaded normally:

Check the service status:

[root@node1 ~]# systemctl status minio

● minio.service - MinIO

Loaded: loaded (/etc/systemd/system/minio.service; enabled; vendor preset: disabled)

Active: active (running) since Fri 2023-12-29 18:53:31 CST; 7h ago

Docs: https://docs.min.io

Process: 15886 ExecStartPre=/bin/bash -c if [ -z "${MINIO_VOLUMES}" ]; then echo "Variable MINIO_VOLUMES not set in /etc/default/minio"; exit 1; fi (code=exited, status=0/SUCCESS)

Main PID: 15889 (minio)

Tasks: 15

Memory: 144.9M

CGroup: /system.slice/minio.service

└─15889 /usr/local/bin/minio server --address :39111 --console-address :39112 http://192.168.123.{11...14}:39111/data1/minio{1...4}

Dec 30 01:59:23 node1 minio[15889]: API: SYSTEM()

Dec 30 01:59:23 node1 minio[15889]: Time: 17:59:23 UTC 12/29/2023

Dec 30 01:59:23 node1 minio[15889]: DeploymentID: 5e75dcd3-d3de-4a08-bdee-3197016ceded

Dec 30 01:59:23 node1 minio[15889]: Error: Marking 192.168.123.14:39111 offline temporarily; caused by Post "http://192.168.123.14:39111/minio/peer/v30/log": dial tcp 192.168.123.14:39111: connect: no route to host (*fmt.wrapError)

Dec 30 01:59:23 node1 minio[15889]: 6: internal/logger/logonce.go:118:logger.(*logOnceType).logOnceIf()

Dec 30 01:59:23 node1 minio[15889]: 5: internal/logger/logonce.go:149:logger.LogOnceIf()

Dec 30 01:59:23 node1 minio[15889]: 4: internal/rest/client.go:259:rest.(*Client).Call()

Dec 30 01:59:23 node1 minio[15889]: 3: cmd/peer-rest-client.go:68:cmd.(*peerRESTClient).callWithContext()

Dec 30 01:59:23 node1 minio[15889]: 2: cmd/peer-rest-client.go:710:cmd.(*peerRESTClient).doConsoleLog()

Dec 30 01:59:23 node1 minio[15889]: 1: cmd/peer-rest-client.go:734:cmd.(*peerRESTClient).ConsoleLog.func1()
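While nodes are being shut down and restarted, it is handy to watch the cluster from the outside as well. The sketch below polls MinIO's unauthenticated health endpoints (`/minio/health/live` per node, `/minio/health/cluster` for write quorum) with curl. The function names are hypothetical, and the node list and port are the ones used in this article.

```shell
#!/usr/bin/env bash
NODES="192.168.123.11 192.168.123.12 192.168.123.13 192.168.123.14"
PORT=39111

# Build the health-check URL for a node; $2 is "live" or "cluster".
health_url() { echo "http://$1:$PORT/minio/health/$2"; }

# Report which nodes answer the liveness probe.
check_nodes() {
  local node
  for node in $NODES; do
    if curl -fsS -o /dev/null --max-time 3 "$(health_url "$node" live)" 2>/dev/null; then
      echo "$node up"
    else
      echo "$node DOWN"
    fi
  done
}

# Succeeds if the node given as $1 reports that the cluster has write quorum.
check_write_quorum() {
  curl -fsS -o /dev/null --max-time 3 "$(health_url "$1" cluster)" 2>/dev/null
}

# Usage:
#   check_nodes
#   check_write_quorum 192.168.123.11 && echo "writable" || echo "no write quorum"
```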

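Shut down one more node: now nothing can be uploaded or downloaded, and the contents can only be browsed:

Power the two stopped nodes back on, and the cluster recovers almost immediately:

Download and upload work again right away, so I will not demonstrate that. The service status no longer shows the errors above: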

[root@node1 ~]# systemctl status minio

● minio.service - MinIO

Loaded: loaded (/etc/systemd/system/minio.service; enabled; vendor preset: disabled)

Active: active (running) since Sat 2023-12-30 02:37:20 CST; 1s ago

Docs: https://docs.min.io

Process: 64997 ExecStartPre=/bin/bash -c if [ -z "${MINIO_VOLUMES}" ]; then echo "Variable MINIO_VOLUMES not set in /etc/default/minio"; exit 1; fi (code=exited, status=0/SUCCESS)

Main PID: 64999 (minio)

Tasks: 11

Memory: 143.1M

CGroup: /system.slice/minio.service

└─64999 /usr/local/bin/minio server --address :39111 --console-address :39112 http://192.168.123.{11...14}:39111/data1/minio{1...4}

Dec 30 02:37:20 node1 minio[64999]: Copyright: 2015-2023 MinIO, Inc.

Dec 30 02:37:20 node1 minio[64999]: License: GNU AGPLv3 <https://www.gnu.org/licenses/agpl-3.0.html>

Dec 30 02:37:20 node1 minio[64999]: Version: RELEASE.2023-04-13T03-08-07Z (go1.20.3 linux/amd64)

Dec 30 02:37:20 node1 minio[64999]: Use `mc admin info` to look for latest server/drive info

Dec 30 02:37:20 node1 minio[64999]: Status: 15 Online, 1 Offline.

Dec 30 02:37:20 node1 minio[64999]: API: http://10.96.24.248:39111 http://192.168.123.11:39111 http://169.254.25.10:39111 http://10.96.0.3:39111 http://10.96.0.1:39111 http://172.17.0.1:39111 http://10.244.26.0:39111 http://127.0.0.1:39111

Dec 30 02:37:20 node1 minio[64999]: Console: http://10.96.24.248:39112 http://192.168.123.11:39112 http://169.254.25.10:39112 http://10.96.0.3:39112 http://10.96.0.1:39112 http://172.17.0.1:39112 http://10.244.26.0:39112 http://127.0.0.1:39112

Dec 30 02:37:20 node1 minio[64999]: Documentation: https://min.io/docs/minio/linux/index.html

Dec 30 02:37:21 node1 minio[64999]: You are running an older version of MinIO released 8 months ago

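Summary:

MinIO can be used as a network drive, but it is usually combined with other components, for example inside a Kubernetes cluster or alongside Kafka, Redis, and so on, to persist data as needed. MinIO also comes with a fairly complete permission system, so security is reasonably well covered.

In this example, shutting down or losing 1 node does not affect the cluster. With 2 nodes shut down or lost, the cluster can only be browsed: objects can no longer be uploaded or downloaded.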
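The behaviour observed in this test lines up with erasure-coding quorum arithmetic. The sketch below assumes a single erasure set of 16 drives with parity EC:4, which is my assumption about the default for this layout (the article does not configure or show the parity), and the usual rule that reads need N minus parity drives while writes need the same number, plus one when parity is exactly half of the set. `quorum_state` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# quorum_state <set_size> <parity> <drives_online>
# Prints whether reads and writes are possible under the assumptions above.
quorum_state() {
  local set_size="$1" parity="$2" online="$3"
  local read_q=$(( set_size - parity ))
  local write_q=$read_q
  # When parity is exactly half the set, writes need one extra drive.
  if (( parity * 2 == set_size )); then
    write_q=$(( read_q + 1 ))
  fi
  local r=fail w=fail
  if (( online >= read_q )); then r=ok; fi
  if (( online >= write_q )); then w=ok; fi
  echo "read=$r write=$w"
}

quorum_state 16 4 16   # all four nodes up
quorum_state 16 4 12   # one node (4 drives) down
quorum_state 16 4 8    # two nodes (8 drives) down
```

With one node down, 12 drives still satisfy both quorums; with two nodes down, 8 drives satisfy neither, which is consistent with uploads and downloads both failing in the test.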

MinIO is decentralized, meaning there is no master/replica distinction between nodes, so nginx can be used to load-balance across them:

upstream minio_servers {

server 192.168.123.11:39111;

server 192.168.123.12:39111;

server 192.168.123.13:39111;

server 192.168.123.14:39111;

}

server {

listen 80;

server_name 192.168.123.11;

# To allow special characters in headers

ignore_invalid_headers off;

# Allow any size file to be uploaded.

# Set to a value such as 1000m; to restrict file size to a specific value

client_max_body_size 0;

# To disable buffering

proxy_buffering off;

location / {

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

proxy_set_header Host $http_host;

proxy_connect_timeout 300;

# Default is HTTP/1, keepalive is only enabled in HTTP/1.1

proxy_http_version 1.1;

proxy_set_header Connection "";

chunked_transfer_encoding off;

proxy_pass http://minio_servers; # If you are using docker-compose this would be the hostname i.e. minio

# Health Check endpoint might go here. See https://www.nginx.com/resources/wiki/modules/healthcheck/

# /minio/health/live;

}

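# custom_sls_log is not a built-in format: define it with log_format in the http block, or drop the name to use the default format

access_log /var/log/nginx/minio_access.log custom_sls_log;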

error_log /var/log/nginx/minio.error.log;

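}

Of course, if the console is given an explicit port and the port is the same on every node, the console can be load-balanced through nginx as well. The configuration is essentially the same as above, so I will not show it.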
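One detail of the nginx configuration deserves a note: the access_log line refers to a log format named custom_sls_log, which the snippet itself does not define, and nginx refuses to start when a named format is unknown. The sketch below writes one possible definition; the fields in it are my own choice, not the author's, and `write_log_format` and the target file name are hypothetical. The file is named so that it sorts before other files in conf.d, because the format has to be defined before it is used.

```shell
#!/usr/bin/env bash
# Write a log_format definition for the name used by the access_log directive.
write_log_format() {
  local dest="$1"
  # Quoted heredoc: the $variables are nginx variables, not shell variables.
  cat > "$dest" <<'EOF'
# Hypothetical definition of the custom_sls_log format (fields are an assumption).
log_format custom_sls_log '$remote_addr - $remote_user [$time_local] '
                          '"$request" $status $body_bytes_sent '
                          '"$http_referer" "$http_user_agent" '
                          '$request_time $upstream_addr';
EOF
}

# Usage (as root, assuming conf.d/*.conf is included from the http block):
#   write_log_format /etc/nginx/conf.d/00-log-format.conf && nginx -t
```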
