DL Homework 10

Exercise 6-1P: Derive the backpropagation through time (BPTT) algorithm for RNNs.

Exercise 6-2: Derive the gradients in Equations (6.40) and (6.41).
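For orientation, the results these two exercises ask for can be written roughly as below. This is only a sketch in a commonly used simple-RNN notation, which is an assumption here because the post does not reproduce the textbook's definitions: $z_k = U h_{k-1} + W x_k + b$, $h_k = f(z_k)$, $L = \sum_{t=1}^{T} L_t$, and $\delta_{t,k} = \partial L_t / \partial z_k$.

```latex
\begin{aligned}
\delta_{t,k} &= \operatorname{diag}\!\big(f'(z_k)\big)\, U^{\top} \delta_{t,k+1},
  \qquad 1 \le k < t, \\
\frac{\partial L}{\partial U} &= \sum_{t=1}^{T} \sum_{k=1}^{t} \delta_{t,k}\, h_{k-1}^{\top}, \\
\frac{\partial L}{\partial W} &= \sum_{t=1}^{T} \sum_{k=1}^{t} \delta_{t,k}\, x_{k}^{\top}, \\
\frac{\partial L}{\partial b} &= \sum_{t=1}^{T} \sum_{k=1}^{t} \delta_{t,k}.
\end{aligned}
```

The first line is the chain rule through $z_{k+1} = U f(z_k) + W x_{k+1} + b$. The other three differ only in what $z_k$ is differentiated against: $h_{k-1}$ for $U$, $x_k$ for $W$, and the constant 1 for $b$.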

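Exercise 6-3: When Equation (6.50) is used as the state update of a recurrent neural network, analyze why gradient explosion may still occur and give a solution.

Admittedly, my math is weak. I stared at this for a long time and still could not follow how the derivation goes through. I will keep studying it, and this post will be updated from time to time.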

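However, the reason gradient explosion can still occur is fairly clear. The cause of vanishing and exploding gradients in an RNN is shown in the figure. Once the update is changed to the formula above, the case that used to give γ < 1 no longer drives the gradient to zero as t-k tends to infinity, which resolves the vanishing gradient problem. Gradient explosion, however, remains: when γ > 1, the factor γ^(t-k) tends to infinity as the propagation path grows, and the gradient explodes.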
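To make the role of γ concrete, here is a small sketch. It treats the per-step gradient factor as a plain scalar, which is a simplifying assumption (in the RNN it is a bound on a matrix product), and the helper name `path_factor` is made up for illustration.

```python
def path_factor(gamma: float, steps: int) -> float:
    """Gradient scale after `steps` time steps if every step multiplies by gamma."""
    return gamma ** steps


# Compare a shrinking, a neutral and a growing per-step factor.
for gamma in (0.9, 1.0, 1.1):
    scales = [path_factor(gamma, n) for n in (1, 10, 100)]
    print(f"gamma={gamma}:", ", ".join(f"{s:.3e}" for s in scales))
```

Over 100 steps, a factor only slightly below 1 already shrinks the gradient by several orders of magnitude, and a factor slightly above 1 inflates it by a similar amount.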

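If the output y_t at time t depends on the input x_k at time k, then when the gap t-k is large a simple recurrent network has difficulty modeling such a long-distance dependency. This is called the Long-Term Dependencies Problem.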

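Because of exploding or vanishing gradients, in practice only short-range dependencies can be learned.

As for improvements, the instructor gave two. The more direct one is to choose suitable parameters and use a non-saturating activation function, so that the per-step factor γ stays as close to 1 as possible. This requires considerable manual tuning experience, which limits how widely the model can be applied.

The other, more effective, approach is to improve the model:

- Weight decay: add an L1 or L2 norm regularization term on the parameters to restrict their range, so that γ ≤ 1.

- Gradient clipping: when the norm of the gradient exceeds a threshold, truncate it to a smaller value.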
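The two remedies in the list above can be sketched in NumPy as follows. This is an illustration under assumptions, not the textbook's code: the clipping shown is the common "rescale by global L2 norm" variant, and the names `clip_by_global_norm` and `l2_penalty_grad` are hypothetical helpers.

```python
import numpy as np


def clip_by_global_norm(grads, max_norm):
    """Rescale all gradients so that their joint L2 norm is at most max_norm."""
    total_norm = np.sqrt(sum(np.sum(g ** 2) for g in grads.values()))
    scale = min(1.0, max_norm / (total_norm + 1e-12))
    return {name: g * scale for name, g in grads.items()}, total_norm


def l2_penalty_grad(param, weight_decay):
    """Gradient of 0.5 * weight_decay * ||param||^2, to be added to the loss gradient."""
    return weight_decay * param


# Deliberately large gradients to trigger clipping.
grads = {"dWaa": np.full((2, 2), 10.0), "dba": np.full((2, 1), 10.0)}
clipped, norm_before = clip_by_global_norm(grads, max_norm=5.0)
norm_after = np.sqrt(sum(np.sum(g ** 2) for g in clipped.values()))
print("norm before:", norm_before, "norm after:", norm_after)
```

Rescaling keeps the direction of the update and only limits its length, which is why it is often preferred over clipping each component separately.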

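Exercise 6-2P: Design a simple RNN model, implement the backpropagation operator in both NumPy and PyTorch, and test it with numerical values.

1. The backward (gradient) functions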

import numpy as np
import torch  # used by the PyTorch comparison further down


def softmax(a):
    # Normalize over the class axis (axis 0) separately for each sample (column).
    # Subtracting the column maximum avoids overflow in exp.
    exp_a = np.exp(a - np.max(a, axis=0, keepdims=True))
    return exp_a / np.sum(exp_a, axis=0, keepdims=True)


def rnn_cell_forward(xt, a_prev, parameters):
    # One time step. xt: (n_x, m), a_prev: (n_a, m).
    Wax = parameters["Wax"]
    Waa = parameters["Waa"]
    Wya = parameters["Wya"]
    ba = parameters["ba"]
    by = parameters["by"]
    a_next = np.tanh(np.dot(Wax, xt) + np.dot(Waa, a_prev) + ba)
    yt_pred = softmax(np.dot(Wya, a_next) + by)
    # Everything the backward pass needs.
    cache = (a_next, a_prev, xt, parameters)
    return a_next, yt_pred, cache


def rnn_cell_backward(da_next, cache):
    # da_next: gradient of the loss w.r.t. a_next, shape (n_a, m).
    (a_next, a_prev, xt, parameters) = cache
    Wax = parameters["Wax"]
    Waa = parameters["Waa"]
    # Backprop through tanh: d tanh(z) / dz = 1 - tanh(z)^2.
    dtanh = (1 - a_next * a_next) * da_next
    dxt = np.dot(Wax.T, dtanh)
    dWax = np.dot(dtanh, xt.T)
    da_prev = np.dot(Waa.T, dtanh)
    dWaa = np.dot(dtanh, a_prev.T)
    # The bias is broadcast over the batch, so its gradient sums over the batch axis.
    dba = np.sum(dtanh, keepdims=True, axis=-1)
    gradients = {"dxt": dxt, "da_prev": da_prev, "dWax": dWax, "dWaa": dWaa, "dba": dba}
    return gradients

# Test rnn_cell_backward on random values.
np.random.seed(1)
xt = np.random.randn(3, 10)
a_prev = np.random.randn(5, 10)
Wax = np.random.randn(5, 3)
Waa = np.random.randn(5, 5)
Wya = np.random.randn(2, 5)
ba = np.random.randn(5, 1)
by = np.random.randn(2, 1)
parameters = {"Wax": Wax, "Waa": Waa, "Wya": Wya, "ba": ba, "by": by}

a_next, yt, cache = rnn_cell_forward(xt, a_prev, parameters)
da_next = np.random.randn(5, 10)
gradients = rnn_cell_backward(da_next, cache)

print('gradients["dxt"][1][2] =', gradients["dxt"][1][2])
print('gradients["dxt"].shape =', gradients["dxt"].shape)
print('gradients["da_prev"][2][3] =', gradients["da_prev"][2][3])
print('gradients["da_prev"].shape =', gradients["da_prev"].shape)
print('gradients["dWax"][3][1] =', gradients["dWax"][3][1])
print('gradients["dWax"].shape =', gradients["dWax"].shape)
print('gradients["dWaa"][1][2] =', gradients["dWaa"][1][2])
print('gradients["dWaa"].shape =', gradients["dWaa"].shape)
print('gradients["dba"][4] =', gradients["dba"][4])
print('gradients["dba"].shape =', gradients["dba"].shape)

Output:

gradients["dxt"][1][2] = -0.4605641030588796
gradients["dxt"].shape = (3, 10)
gradients["da_prev"][2][3] = 0.08429686538067724
gradients["da_prev"].shape = (5, 10)
gradients["dWax"][3][1] = 0.39308187392193034
gradients["dWax"].shape = (5, 3)
gradients["dWaa"][1][2] = -0.28483955786960663
gradients["dWaa"].shape = (5, 5)
gradients["dba"][4] = [0.80517166]
gradients["dba"].shape = (5, 1)
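Printed numbers alone do not show that the backward pass is correct. One common way to check it is to compare against central finite differences. The sketch below is self-contained and uses its own small tanh cell rather than the functions above. The names `cell_forward`, `cell_backward` and `numerical_grad` are illustrative, and the scalar loss `sum(da_next * a_next)` is chosen purely so that the upstream gradient equals `da_next`.

```python
import numpy as np


def cell_forward(xt, a_prev, Wax, Waa, ba):
    return np.tanh(Wax @ xt + Waa @ a_prev + ba)


def cell_backward(da_next, a_next, a_prev, xt, Wax, Waa):
    dz = (1 - a_next ** 2) * da_next  # backprop through tanh
    return {"dxt": Wax.T @ dz, "da_prev": Waa.T @ dz,
            "dWax": dz @ xt.T, "dWaa": dz @ a_prev.T,
            "dba": dz.sum(axis=1, keepdims=True)}


def numerical_grad(f, arr, eps=1e-6):
    """Central finite differences of the scalar f() w.r.t. arr (perturbed in place)."""
    grad = np.zeros_like(arr)
    for idx in np.ndindex(arr.shape):
        old = arr[idx]
        arr[idx] = old + eps
        f_plus = f()
        arr[idx] = old - eps
        f_minus = f()
        arr[idx] = old  # restore the original value
        grad[idx] = (f_plus - f_minus) / (2 * eps)
    return grad


rng = np.random.default_rng(0)
xt, a_prev = rng.standard_normal((3, 4)), rng.standard_normal((5, 4))
Wax, Waa = rng.standard_normal((5, 3)), rng.standard_normal((5, 5))
ba = rng.standard_normal((5, 1))
da_next = rng.standard_normal((5, 4))

# Scalar loss whose gradient w.r.t. a_next is exactly da_next.
loss = lambda: np.sum(da_next * cell_forward(xt, a_prev, Wax, Waa, ba))
a_next = cell_forward(xt, a_prev, Wax, Waa, ba)
analytic = cell_backward(da_next, a_next, a_prev, xt, Wax, Waa)

max_err = {}
for name, arr in [("dxt", xt), ("da_prev", a_prev), ("dWax", Wax),
                  ("dWaa", Waa), ("dba", ba)]:
    max_err[name] = np.max(np.abs(analytic[name] - numerical_grad(loss, arr)))
print(max_err)
```

If every entry of `max_err` is tiny compared with the gradients themselves, the analytic formulas and the numerical estimate agree.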

def rnn_forward(x, a0, parameters):
    # Run the cell over a whole sequence. x: (n_x, m, T_x), a0: (n_a, m).
    caches = []
    n_x, m, T_x = x.shape
    n_y, n_a = parameters["Wya"].shape
    a = np.zeros((n_a, m, T_x))
    y_pred = np.zeros((n_y, m, T_x))
    a_next = a0
    for t in range(T_x):
        a_next, yt_pred, cache = rnn_cell_forward(x[:, :, t], a_next, parameters)
        a[:, :, t] = a_next
        y_pred[:, :, t] = yt_pred
        caches.append(cache)
    # Keep the per-step caches together with the full input.
    caches = (caches, x)
    return a, y_pred, caches


# Test rnn_forward on random values.
np.random.seed(1)
x = np.random.randn(3, 10, 4)
a0 = np.random.randn(5, 10)
Waa = np.random.randn(5, 5)
Wax = np.random.randn(5, 3)
Wya = np.random.randn(2, 5)
ba = np.random.randn(5, 1)
by = np.random.randn(2, 1)
parameters = {"Waa": Waa, "Wax": Wax, "Wya": Wya, "ba": ba, "by": by}

a, y_pred, caches = rnn_forward(x, a0, parameters)
print("a[4][1] = ", a[4][1])
print("a.shape = ", a.shape)
print("y_pred[1][3] =", y_pred[1][3])
print("y_pred.shape = ", y_pred.shape)
# caches[1] is the input x, so this prints x[1][3].
print("caches[1][1][3] =", caches[1][1][3])
print("len(caches) = ", len(caches))
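The forward pass over the sequence has a natural counterpart: walking the time steps in reverse and accumulating the parameter gradients, which is what BPTT amounts to in code. The following is a self-contained sketch under assumptions: it covers only the tanh hidden state (no softmax output), it assumes a gradient `da[:, :, t]` arrives directly at every hidden state, and `rnn_forward_tanh` and `rnn_backward_tanh` are illustrative names rather than functions from this post.

```python
import numpy as np


def rnn_forward_tanh(x, a0, Wax, Waa, ba):
    """Run a tanh RNN over x of shape (n_x, m, T); return all hidden states."""
    n_a, m, T = Waa.shape[0], x.shape[1], x.shape[2]
    a = np.zeros((n_a, m, T))
    a_prev = a0
    for t in range(T):
        a_prev = np.tanh(Wax @ x[:, :, t] + Waa @ a_prev + ba)
        a[:, :, t] = a_prev
    return a


def rnn_backward_tanh(da, a, x, a0, Wax, Waa):
    """BPTT: da[:, :, t] is the loss gradient arriving directly at a[:, :, t]."""
    T = x.shape[2]
    dWax, dWaa = np.zeros_like(Wax), np.zeros_like(Waa)
    dba = np.zeros((Waa.shape[0], 1))
    dx = np.zeros_like(x)
    da_future = np.zeros_like(a0)  # gradient flowing back from step t + 1
    for t in reversed(range(T)):
        a_prev = a[:, :, t - 1] if t > 0 else a0
        dz = (1 - a[:, :, t] ** 2) * (da[:, :, t] + da_future)
        dWax += dz @ x[:, :, t].T  # shared weights: sum over time steps
        dWaa += dz @ a_prev.T
        dba += dz.sum(axis=1, keepdims=True)
        dx[:, :, t] = Wax.T @ dz
        da_future = Waa.T @ dz
    return {"dx": dx, "da0": da_future, "dWax": dWax, "dWaa": dWaa, "dba": dba}


rng = np.random.default_rng(1)
x, a0 = rng.standard_normal((3, 10, 4)), rng.standard_normal((5, 10))
Wax, Waa = rng.standard_normal((5, 3)), rng.standard_normal((5, 5))
ba = rng.standard_normal((5, 1))
a = rnn_forward_tanh(x, a0, Wax, Waa, ba)
da = rng.standard_normal(a.shape)
grads = rnn_backward_tanh(da, a, x, a0, Wax, Waa)
print({k: v.shape for k, v in grads.items()})
```

Because the same `Wax`, `Waa` and `ba` are used at every step, their gradients are sums over time, while `dx` keeps one slice per step.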

2. Implementing the backpropagation operator in NumPy and in PyTorch, and comparing the two

class RNNCell:
    """NumPy tanh RNN cell that records what it needs for a manual backward pass."""

    def __init__(self, weight_ih, weight_hh, bias_ih, bias_hh):
        self.weight_ih = weight_ih
        self.weight_hh = weight_hh
        self.bias_ih = bias_ih
        self.bias_hh = bias_hh

        # Per-step caches: pushed in forward order, popped in backward order.
        self.x_stack = []
        self.dx_list = []
        self.dw_ih_stack = []
        self.dw_hh_stack = []
        self.db_ih_stack = []
        self.db_hh_stack = []
        self.prev_hidden_stack = []
        self.next_hidden_stack = []

        # Gradient w.r.t. the hidden state flowing back from the following step.
        self.prev_dh = None

    def __call__(self, x, prev_hidden):
        # Forward step, same formula as torch.nn.RNN with tanh.
        self.x_stack.append(x)
        next_h = np.tanh(
            np.dot(x, self.weight_ih.T)
            + np.dot(prev_hidden, self.weight_hh.T)
            + self.bias_ih + self.bias_hh)
        self.prev_hidden_stack.append(prev_hidden)
        self.next_hidden_stack.append(next_h)
        # Reset the carried gradient; nothing flows back past the last step.
        self.prev_dh = np.zeros(next_h.shape)
        return next_h

    def backward(self, dh):
        # Must be called once per time step, from the last step to the first.
        x = self.x_stack.pop()
        prev_hidden = self.prev_hidden_stack.pop()
        next_hidden = self.next_hidden_stack.pop()

        # Total gradient at this step = direct gradient + gradient from the future.
        d_tanh = (dh + self.prev_dh) * (1 - next_hidden ** 2)
        self.prev_dh = np.dot(d_tanh, self.weight_hh)

        dx = np.dot(d_tanh, self.weight_ih)
        self.dx_list.insert(0, dx)  # keep dx in forward time order

        dw_ih = np.dot(d_tanh.T, x)
        self.dw_ih_stack.append(dw_ih)
        dw_hh = np.dot(d_tanh.T, prev_hidden)
        self.dw_hh_stack.append(dw_hh)

        # Bias gradients are summed over time and batch by the caller.
        self.db_ih_stack.append(d_tanh)
        self.db_hh_stack.append(d_tanh)
        return self.dx_list

if __name__ == '__main__':

np.random.seed(123)

torch.random.manual_seed(123)

np.set_printoptions(precision=6, suppress=True)

rnn_PyTorch = torch.nn.RNN(4, 5).double()

rnn_numpy = RNNCell(rnn_PyTorch.all_weights[0][0].data.numpy(),

rnn_PyTorch.all_weights[0][1].data.numpy(),

rnn_PyTorch.all_weights[0][2].data.numpy(),

rnn_PyTorch.all_weights[0][3].data.numpy())

nums = 3

x3_numpy = np.random.random((nums, 3, 4))

x3_tensor = torch.tensor(x3_numpy, requires_grad=True)

h3_numpy = np.random.random((1, 3, 5))

h3_tensor = torch.tensor(h3_numpy, requires_grad=True)

dh_numpy = np.random.random((nums, 3, 5))

dh_tensor = torch.tensor(dh_numpy, requires_grad=True)

h3_tensor = rnn_PyTorch(x3_tensor, h3_tensor)

h_numpy_list = []

h_numpy = h3_numpy[0]

for i in range(nums):

h_numpy = rnn_numpy(x3_numpy[i], h_numpy)

h_numpy_list.append(h_numpy)

h3_tensor[0].backward(dh_tensor)

for i in reversed(range(nums)):

rnn_numpy.backward(dh_numpy[i])

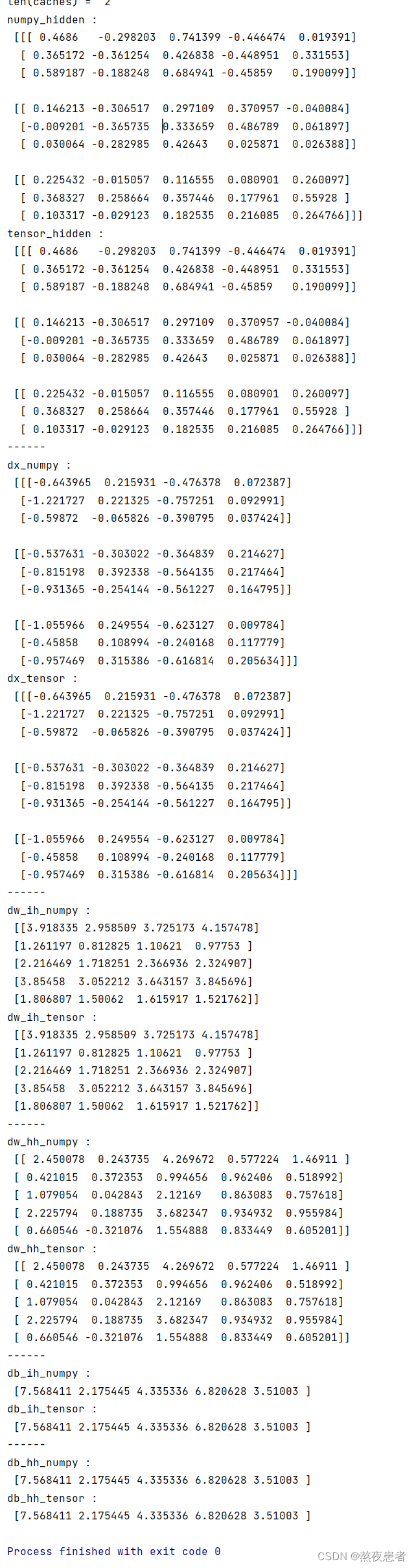

print("numpy_hidden :\n", np.array(h_numpy_list))

print("tensor_hidden :\n", h3_tensor[0].data.numpy())

print("------")

print("dx_numpy :\n", np.array(rnn_numpy.dx_list))

print("dx_tensor :\n", x3_tensor.grad.data.numpy())

print("------")

print("dw_ih_numpy :\n",

np.sum(rnn_numpy.dw_ih_stack, axis=0))

print("dw_ih_tensor :\n",

rnn_PyTorch.all_weights[0][0].grad.data.numpy())

print("------")

print("dw_hh_numpy :\n",

np.sum(rnn_numpy.dw_hh_stack, axis=0))

print("dw_hh_tensor :\n",

rnn_PyTorch.all_weights[0][1].grad.data.numpy())

print("------")

print("db_ih_numpy :\n",

np.sum(rnn_numpy.db_ih_stack, axis=(0, 1)))

print("db_ih_tensor :\n",

rnn_PyTorch.all_weights[0][2].grad.data.numpy())

print("------")

print("db_hh_numpy :\n",

np.sum(rnn_numpy.db_hh_stack, axis=(0, 1)))

print("db_hh_tensor :\n",

rnn_PyTorch.all_weights[0][3].grad.data.numpy())

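Result: the NumPy implementation and the PyTorch implementation give essentially the same results.

Summary

This assignment centered on deriving BPTT by hand and implementing it in code. (Working through a concrete example, I could follow the derivation clearly, but when deriving it in general form my math background really could not keep up. I will put in more work outside class. I do not think this post is well written: with my math lagging behind, a lot of it felt beyond me, and it is not really finished, so I will keep updating it.)

First, for RTRL and BPTT, the derivation of both learning algorithms needs to be clear (although I have not fully got there yet and only half understand them).

Gradient explosion and gradient vanishing are no longer unfamiliar to us. The question is how to reduce their impact as much as possible. Gradient explosion can be handled with weight decay, gradient clipping, and so on. Gradient vanishing can be eased by adding a nonlinearity, among other measures, and the capacity problem that arises after adding the nonlinearity motivates gating mechanisms such as LSTM. All of this should fit together into one complete picture.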
