Kubernetes (Part 3)

2024-01-07 23:56:42

1. Kubernetes autoscaling

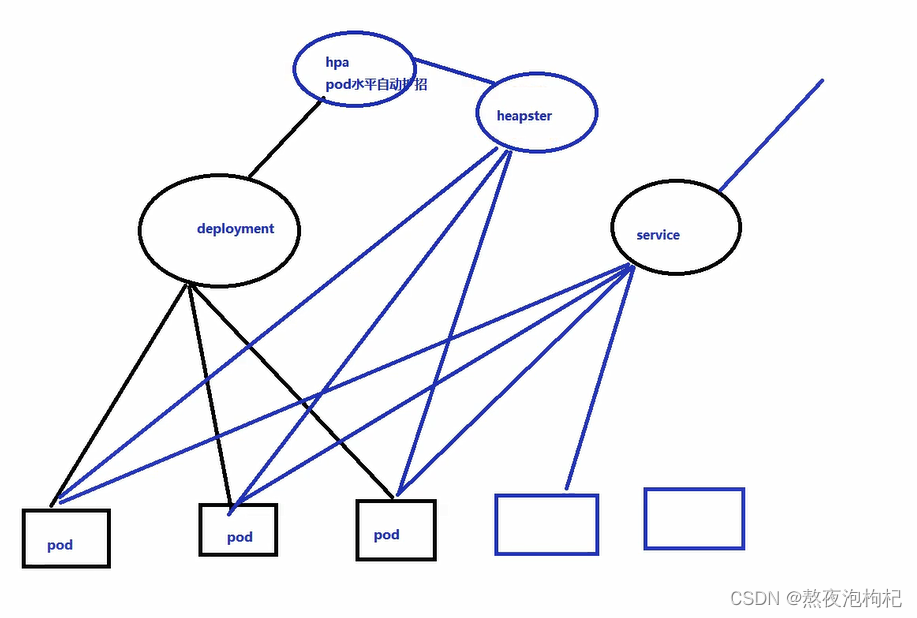

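Autoscaling in Kubernetes requires the Heapster add-on.

1.1 Installing Heapster monitoring

Heapster (used on older Kubernetes versions) monitors how much load each pod is under.

An HPA (Horizontal Pod Autoscaler) uses those metrics to adjust the number of pods, scaling out or in automatically.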

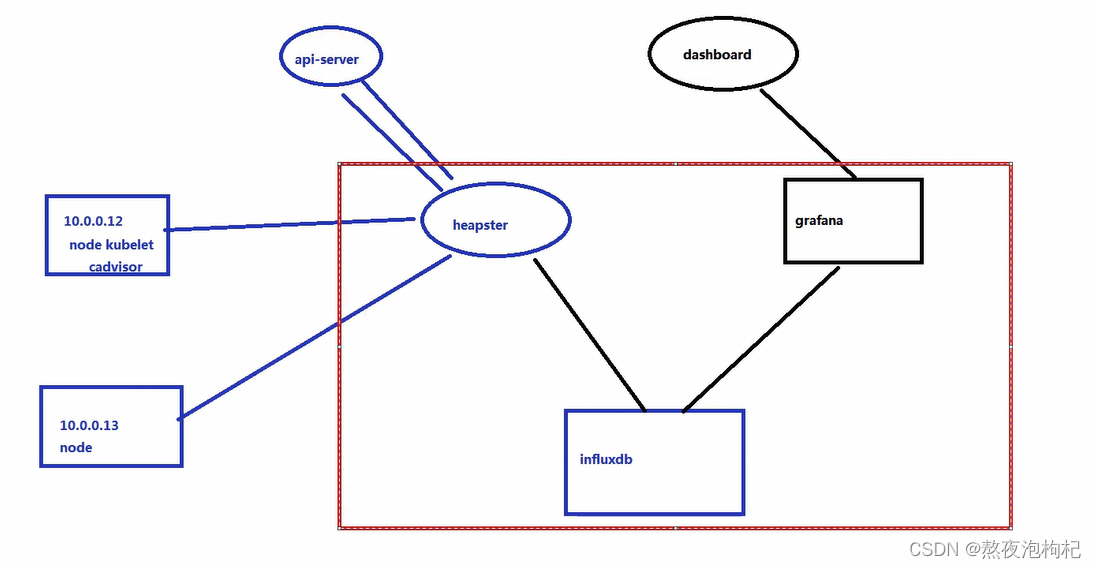

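1. Heapster first connects to the API server and fetches information about the nodes.

2. It then collects metrics from each node and stores the values in a database (InfluxDB in this setup).

3. Grafana renders the graphs, which the dashboard can also display.

Install Heapster monitoring:

Load the images in bulk: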

[root@k8s-node-2 heapster]# for image in `ls *.tar.gz`; do docker load -i $image; done

[root@k8s-node-2 heapster]# docker tag docker.io/kubernetes/heapster_grafana:v2.6.0 10.0.0.11:5000/heapster_grafana:v2.6.0

[root@k8s-node-2 heapster]# docker tag docker.io/kubernetes/heapster_influxdb:v0.5 10.0.0.11:5000/heapster_influxdb:v0.5

[root@k8s-node-2 heapster]# docker tag docker.io/kubernetes/heapster:canary 10.0.0.11:5000/heapster:canary
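The three docker tag commands above all follow one pattern: keep the image name and tag, and replace the docker.io/kubernetes/ prefix with the private registry address. A minimal sketch of that mapping, assuming a hypothetical helper named `local_tag` (it only builds the command strings and does not call Docker):

```python
REGISTRY = "10.0.0.11:5000"  # private registry address used throughout this article


def local_tag(image: str, registry: str) -> str:
    """Return the image reference re-homed under a private registry.

    Only the last path component (name:tag) of the source image is kept.
    """
    name_and_tag = image.rsplit("/", 1)[-1]
    return f"{registry}/{name_and_tag}"


images = [
    "docker.io/kubernetes/heapster_grafana:v2.6.0",
    "docker.io/kubernetes/heapster_influxdb:v0.5",
    "docker.io/kubernetes/heapster:canary",
]

# Build one "docker tag" command per loaded image.
commands = [f"docker tag {image} {local_tag(image, REGISTRY)}" for image in images]

for command in commands:
    print(command)
```

The printed lines match the commands shown above, which makes it easy to extend the list if more images are added to the directory.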

Write the resource manifests:

(1) The "grafana-service.yaml" manifest

[root@k8s-master heapster]# cat grafana-service.yaml

apiVersion: v1
kind: Service
metadata:
  labels:
    kubernetes.io/cluster-service: 'true'
    kubernetes.io/name: monitoring-grafana
  name: monitoring-grafana
  namespace: kube-system
spec:
  ports:
  - port: 80
    targetPort: 3000
  selector:
    name: influxGrafana

(2) The "heapster-controller.yaml" manifest

[root@k8s-master heapster]# cat heapster-controller.yaml

apiVersion: v1
kind: ReplicationController
metadata:
  labels:
    k8s-app: heapster
    name: heapster
    version: v6
  name: heapster
  namespace: kube-system
spec:
  replicas: 1
  selector:
    k8s-app: heapster
    version: v6
  template:
    metadata:
      labels:
        k8s-app: heapster
        version: v6
    spec:
      nodeName: 10.0.0.13
      containers:
      - name: heapster
        image: 10.0.0.11:5000/heapster:canary
        imagePullPolicy: IfNotPresent
        command:
        - /heapster
        - --source=kubernetes:http://10.0.0.11:8080?inClusterConfig=false
        # the name monitoring-influxdb is resolved through DNS, so the Kubernetes DNS add-on must be installed
        - --sink=influxdb:http://monitoring-influxdb:8086

(3) The "heapster-service.yaml" manifest

[root@k8s-master heapster]# cat heapster-service.yaml

apiVersion: v1
kind: Service
metadata:
  labels:
    kubernetes.io/cluster-service: 'true'
    kubernetes.io/name: Heapster
  name: heapster
  namespace: kube-system
spec:
  ports:
  - port: 80
    targetPort: 8082
  selector:
    k8s-app: heapster

(4) The "influxdb-grafana-controller.yaml" manifest

[root@k8s-master heapster]# cat influxdb-grafana-controller.yaml

apiVersion: v1
kind: ReplicationController
metadata:
  labels:
    name: influxGrafana
  name: influxdb-grafana
  namespace: kube-system
spec:
  replicas: 1
  selector:
    name: influxGrafana
  template:
    metadata:
      labels:
        name: influxGrafana
    spec:
      nodeName: 10.0.0.13
      containers:
      - name: influxdb
        image: 10.0.0.11:5000/heapster_influxdb:v0.5
        volumeMounts:
        - mountPath: /data
          name: influxdb-storage
      - name: grafana
        image: 10.0.0.11:5000/heapster_grafana:v2.6.0
        env:
        - name: INFLUXDB_SERVICE_URL
          value: http://monitoring-influxdb:8086
        - name: GF_AUTH_BASIC_ENABLED
          value: "false"
        - name: GF_AUTH_ANONYMOUS_ENABLED
          value: "true"
        - name: GF_AUTH_ANONYMOUS_ORG_ROLE
          value: Admin
        - name: GF_SERVER_ROOT_URL
          value: /api/v1/proxy/namespaces/kube-system/services/monitoring-grafana/
        volumeMounts:
        - mountPath: /var
          name: grafana-storage
      volumes:
      - name: influxdb-storage
        emptyDir: {}
      - name: grafana-storage
        emptyDir: {}

(5) The "influxdb-service.yaml" manifest

[root@k8s-master heapster]# cat influxdb-service.yaml

apiVersion: v1
kind: Service
metadata:
  labels: null
  name: monitoring-influxdb
  namespace: kube-system
spec:
  ports:
  - name: http
    port: 8083
    targetPort: 8083
  - name: api
    port: 8086
    targetPort: 8086
  selector:
    name: influxGrafana

# Apply the manifests and verify

[root@k8s-master heapster]# kubectl apply -f .

[root@k8s-master heapster]# kubectl get all -n kube-system

## The heapster and grafana services now appear in the cluster info

[root@k8s-master heapster]# kubectl cluster-info

1.2 Using and verifying autoscaling

(1) Write a Deployment and a Service (svc) so the application can be reached

[root@k8s-master deployment]# cat 01-deploy.yaml

apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: nginx
spec:
  replicas: 2
  template:
    metadata:
      labels:
        app: dk-nginx
    spec:
      containers:
      - name: nginx
        image: 10.0.0.11:5000/nginx:1.13
        ports:
        - containerPort: 80
        resources:
          limits:
            cpu: 100m
            memory: 50M
          requests:
            cpu: 100m
            memory: 50M
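The resource values in this Deployment matter for the HPA created later, because its CPU percentage is measured against each container's CPU request. As a rough illustration of the units, `100m` means 100 millicores (0.1 CPU) and `50M` means 50 decimal megabytes, which is slightly less than `50Mi`. The helper names below are hypothetical, and the parser covers only the handful of suffixes listed in the table:

```python
# Byte multipliers for a few common memory suffixes (decimal and binary).
MEMORY_SUFFIXES = {
    "k": 1000,
    "M": 1000**2,
    "G": 1000**3,
    "Ki": 1024,
    "Mi": 1024**2,
    "Gi": 1024**3,
}


def cpu_to_millicores(quantity: str) -> int:
    """Convert a CPU quantity such as '100m' or '0.5' to millicores."""
    if quantity.endswith("m"):
        return int(quantity[:-1])
    return round(float(quantity) * 1000)


def memory_to_bytes(quantity: str) -> int:
    """Convert a memory quantity such as '50M' or '50Mi' to bytes."""
    for suffix, factor in MEMORY_SUFFIXES.items():
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * factor
    return int(quantity)  # no suffix: the value is already in bytes


def target_millicores(cpu_request: str, percent: int) -> float:
    """Average CPU usage per pod that corresponds to an HPA CPU target."""
    return cpu_to_millicores(cpu_request) * percent / 100


print(cpu_to_millicores("100m"))      # CPU request in millicores
print(memory_to_bytes("50M"))         # memory request in bytes
print(target_millicores("100m", 10))  # usage level for a 10% target
```

With a 100m request, a 10% CPU target corresponds to an average of only 10 millicores per pod, so even light traffic can push the Deployment past the threshold.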

[root@k8s-master svc]# cat k8s_svc.yaml

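apiVersion: v1
kind: Service # short name: svc
metadata:
  name: myweb
spec:
  type: NodePort # the default type is ClusterIP
  ports:
  - port: 80 # port on the cluster VIP
    nodePort: 30000 # port exposed on every node; if omitted, an unused port is picked at random from the 30000-32767 range
    targetPort: 80 # port the backend Pods listen on
  selector:
    app: dk-nginx # must match the labels of the target Pods (the Deployment above labels them app: dk-nginx)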
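A Service forwards traffic only to Pods whose labels contain every key/value pair in its selector, so the selector has to agree with the labels in the Deployment's Pod template (`app: dk-nginx` in 01-deploy.yaml). A minimal sketch of that equality-based matching rule, using a hypothetical function name and covering only non-empty selectors:

```python
def selector_matches(selector: dict, pod_labels: dict) -> bool:
    """Return True when every selector key/value pair is present in the Pod's labels."""
    return all(pod_labels.get(key) == value for key, value in selector.items())


# Labels taken from the Pod template of the nginx Deployment.
pod_labels = {"app": "dk-nginx"}

print(selector_matches({"app": "dk-nginx"}, pod_labels))  # selector agrees with the Pod labels
print(selector_matches({"app": "ycy-web"}, pod_labels))   # a different value selects no Pods
```

If the second case applies, the Service still gets a cluster IP and a NodePort, but it has no endpoints and requests to it fail. `kubectl describe svc myweb` shows the endpoint list and is a quick way to confirm the match.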

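(2) Create an HPA resource

# 1. Create it from the command line; the generated HPA definition is held by the cluster rather than written to a file. --max: maximum number of Pods, --min: minimum number of Pods, --cpu-percent: target CPU utilization percentage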

[root@k8s-master k8s_yaml]# kubectl autoscale deployment nginx --max=10 --min=1 --cpu-percent=10

# 2. Export the YAML of the HPA that was just created

[root@k8s-master k8s_yaml]# kubectl get hpa nginx -o yaml

apiVersion: autoscaling/v1
kind: HorizontalPodAutoscaler
metadata:
  name: nginx
  namespace: default
spec:
  maxReplicas: 10
  minReplicas: 1
  scaleTargetRef:
    apiVersion: extensions/v1beta1
    kind: Deployment
    name: nginx
  targetCPUUtilizationPercentage: 10
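How the controller turns the 10% target into a replica count can be sketched as follows. The formula is the one described in the Kubernetes HPA documentation: desired replicas = ceil(current replicas × current utilization / target utilization), clamped to minReplicas and maxReplicas. The function name and the sample utilization figures are illustrative assumptions, and the sketch leaves out the tolerance band and the scale-up/scale-down delays that a real controller applies:

```python
import math


def desired_replicas(
    current_replicas: int,
    current_utilization: float,
    target_utilization: float,
    min_replicas: int,
    max_replicas: int,
) -> int:
    """Simplified HPA calculation: scale proportionally, then clamp to the bounds."""
    raw = math.ceil(current_replicas * current_utilization / target_utilization)
    return max(min_replicas, min(max_replicas, raw))


# Values from the HPA above: target 10%, between 1 and 10 replicas.
# The utilization figures are made-up sample inputs.
for utilization in (0, 5, 35, 80):
    replicas = desired_replicas(2, utilization, 10, 1, 10)
    print(f"average CPU {utilization}% of request -> {replicas} replicas")
```

Starting from 2 replicas, an average utilization of 35% asks for 7 replicas, 80% is capped at the maximum of 10, and an idle Deployment shrinks to the minimum of 1.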

# 3. Inspect the HPA resource

[root@k8s-master k8s_yaml]# kubectl get all

[root@k8s-master k8s_yaml]# kubectl get rs -o wide

[root@k8s-master k8s_yaml]# kubectl get hpa nginx -o yaml

[root@k8s-master k8s_yaml]# kubectl describe hpa nginx

[root@k8s-master k8s_yaml]# kubectl describe deployment nginx

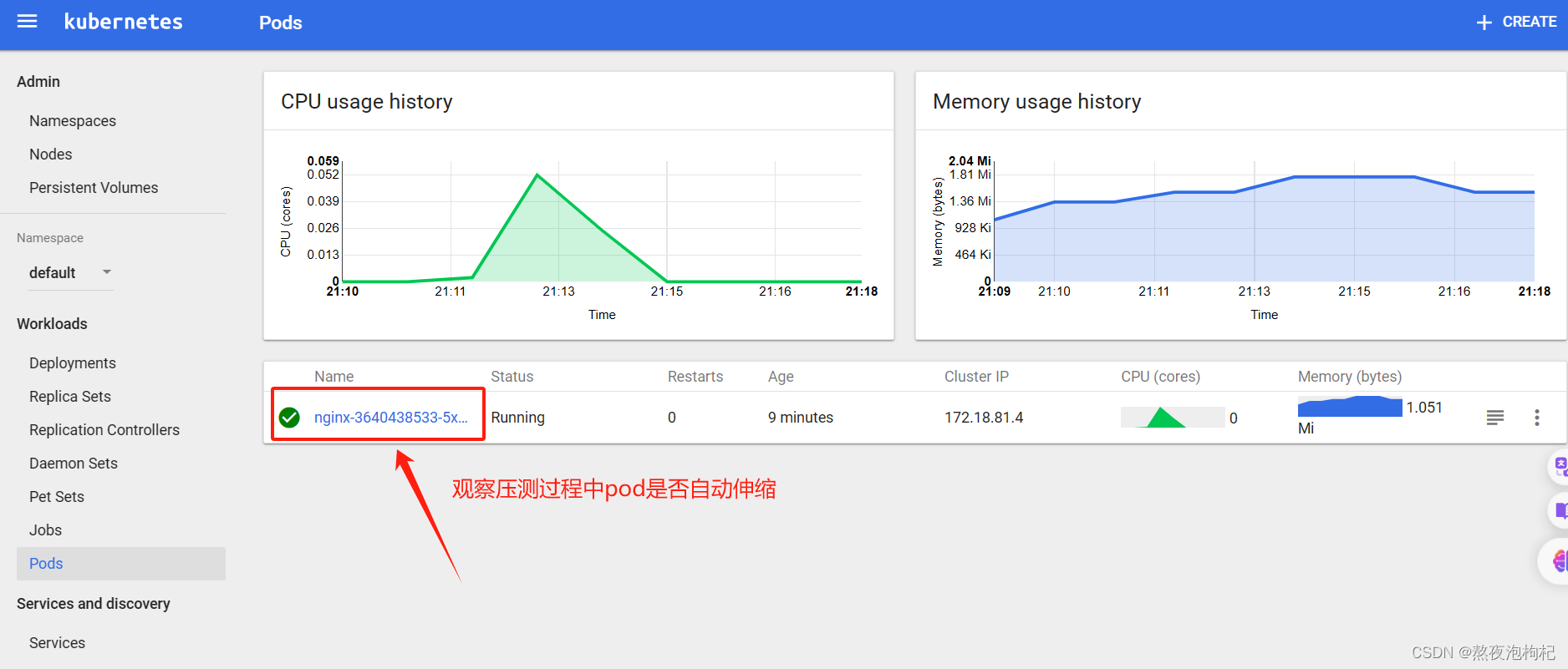

(3) Run a load test to verify that the Deployment scales automatically

# 1. Install the ab load-testing tool

[root@k8s-master k8s_yaml]# yum install httpd-tools -y

# 2. -n: total number of requests, -c: number of concurrent requests

[root@k8s-master k8s_yaml]# ab -n 10000 -c 2000 http://10.0.0.12:30000/

Common commands:

kubectl get all -n kube-system

kubectl cluster-info

# Show a pod's resource usage

kubectl top -n default pod test-32rf5

# Delete the corresponding resources

kubectl delete rc --all

kubectl delete deployment --all

kubectl delete daemonset --all

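2. Persistent storage

In the tomcat+mysql example, once the Pods are running you can add custom data, which is stored in the MySQL instance. If all containers are then deleted in bulk with docker rm -f $(docker ps -a -q), Kubernetes recreates them. The service recovers, but the data is lost. To keep data from being lost when a container is deleted, Kubernetes provides volume types, similar to data volumes in Docker.

Tip: run kubectl exec -it mysql-698607359-dvmzk bash to enter the container, then run "env" to see the MySQL root password.

Classification of volumes:

Volumes:

(1) A Kubernetes Volume gives containers the ability to mount external storage.

(2) A Pod can use a volume only after both the volume source (po.spec.volumes) and the mount point (po.spec.containers.volumeMounts) have been set.

Rough classification of volume types:

Local volumes:

hostPath, emptyDir, etc.

Network volumes:

NFS, Ceph, GlusterFS, etc.

Public cloud:

AWS EBS, etc.

Kubernetes resources:

configMap, secret, etc.

Official documentation: https://kubernetes.io/docs/concepts/storage/volumes/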

2.1 emptyDir

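View the help with kubectl explain pod.spec.containers.volumeMounts

An emptyDir is a temporary empty directory tied to the Pod's lifecycle: when the Pod is deleted, the contents of the directory disappear with it. It cannot be used to share data between Pods. If a Deployment runs three Pods, each Pod gets its own emptyDir, so Pods cannot exchange data through it. Multiple containers in the same Pod, however, can share data through an emptyDir.

Use cases: storing logs, and cases where each Pod should hold its own separate data in its emptyDir.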

spec:
  nodeName: 10.0.0.13
  volumes:
  - name: mysql-data
    emptyDir: {}
  containers:
  - name: wp-mysql
    image: 10.0.0.11:5000/mysql:5.7
    imagePullPolicy: IfNotPresent
    ports:
    - containerPort: 3306
    volumeMounts:
    - name: mysql-data
      mountPath: /var/lib/mysql
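In the fragment above, the entry under volumes and the entry under volumeMounts are linked only by the shared name mysql-data. A minimal sketch of that pairing rule, with the fragment transcribed into a Python dict (the helper name `undeclared_mounts` is hypothetical and not part of any Kubernetes tooling):

```python
def undeclared_mounts(pod_spec: dict) -> list:
    """Return (container, volume) pairs whose volumeMount names no declared volume."""
    declared = {volume["name"] for volume in pod_spec.get("volumes", [])}
    missing = []
    for container in pod_spec.get("containers", []):
        for mount in container.get("volumeMounts", []):
            if mount["name"] not in declared:
                missing.append((container["name"], mount["name"]))
    return missing


# The Pod spec fragment from this section, reduced to the fields that matter here.
pod_spec = {
    "volumes": [{"name": "mysql-data", "emptyDir": {}}],
    "containers": [
        {
            "name": "wp-mysql",
            "volumeMounts": [{"name": "mysql-data", "mountPath": "/var/lib/mysql"}],
        }
    ],
}

print(undeclared_mounts(pod_spec))  # an empty list means every mount has a matching volume
```

Removing the volumes entry from the dict makes the function report the wp-mysql container and its mysql-data mount, which mirrors the validation error the API server raises for such a manifest.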

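Source: https://blog.csdn.net/weixin_46818279/article/details/135383707

This article was contributed by an internet user. The views expressed are the author's own and do not represent the position of this site. The site only provides information storage space, holds no ownership, and assumes no related legal liability. If the content is infringing, illegal, or factually incorrect, please report it to the site's mailbox at veading@qq.com; once verified, it will be removed immediately.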
