Kubernetes 1.27.4 Cluster Deployment (High Availability)

2023-12-23 20:56:31

1. Introduction

2. Environment Preparation

| Host | IP |
|---|---|
| master01 | 172.16.100.21 |
| master02 | 172.16.100.22 |
| master03 | 172.16.100.23 |
| node01 | 172.16.100.11 |
| node02 | 172.16.100.12 |

VIP: 172.16.100.20

Service virtual IP range: 10.0.0.0/24

Pod network CIDR: 10.244.0.0/16

3. Initialization

3.1 Configure hosts

cat >> /etc/hosts <<EOF

172.16.100.11 node01

172.16.100.12 node02

172.16.100.21 master01

172.16.100.22 master02

172.16.100.23 master03

EOF

3.2 Passwordless SSH from master01 to all nodes

ssh-keygen

ssh-copy-id master01

ssh-copy-id master02

ssh-copy-id master03

ssh-copy-id node01

ssh-copy-id node02

3.3 Install required packages

yum -y install wget jq psmisc vim net-tools nfs-utils telnet yum-utils device-mapper-persistent-data lvm2 git network-scripts tar curl ipvsadm ipset sysstat conntrack libseccomp

3.4 Disable the firewall, SELinux, and swap

# Disable the firewall and SELinux

systemctl disable --now firewalld

setenforce 0

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config

# Disable swap

sed -ri 's/.*swap.*/#&/' /etc/fstab

swapoff -a && sysctl -w vm.swappiness=0

3.5 Raise the file handle limits

ulimit -SHn 65535

cat >> /etc/security/limits.conf <<EOF

* soft nofile 655360

* hard nofile 655360

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

EOF

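3.6 Configure ipvs.conf

mkdir -p /etc/modules-load.d/

# Back up an existing ipvs.conf, if there is one
[ -f /etc/modules-load.d/ipvs.conf ] && cp /etc/modules-load.d/ipvs.conf /etc/modules-load.d/ipvs.conf.bak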

cat > /etc/modules-load.d/ipvs.conf <<EOF

ipip

ip_set

ip_tables

ipt_REJECT

ipt_rpfilter

ipt_set

ip_vs

ip_vs_dh

ip_vs_fo

ip_vs_ftp

ip_vs_lblc

ip_vs_lblcr

ip_vs_lc

ip_vs_nq

ip_vs_rr

ip_vs_sed

ip_vs_sh

ip_vs_wlc

ip_vs_wrr

nf_conntrack

xt_set

EOF

systemctl restart systemd-modules-load.service

lsmod | grep -e ip_vs -e nf_conntrack

3.7 Tune kernel parameters

cat > /etc/sysctl.d/k8s.conf <<EOF

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

vm.overcommit_memory = 1

vm.panic_on_oom = 0

fs.inotify.max_user_watches = 89100

fs.file-max = 52706963

fs.nr_open = 52706963

net.netfilter.nf_conntrack_max = 2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl = 15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

net.ipv6.conf.all.disable_ipv6 = 0

net.ipv6.conf.default.disable_ipv6 = 0

net.ipv6.conf.lo.disable_ipv6 = 0

net.ipv6.conf.all.forwarding = 1

EOF

sysctl --system

4. High availability setup (keepalived, haproxy)

4.1 keepalived

4.1.1 Install keepalived

yum -y install keepalived

4.1.2 keepalived configuration file

#! Configuration File for keepalived

global_defs {

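router_id directory1 # just a name; use directory2 on the backup node (the two names must differ)

}

vrrp_instance VI_1 {

state MASTER # role of this node; use BACKUP on the backup node

interface ens192 # interface the VIP is bound to

virtual_router_id 80 # must be identical on all nodes of the same cluster

priority 100 # priority; use 50 on the backup (step by 50)

advert_int 1 # advertisement interval in seconds

authentication {

auth_type PASS # authentication between master and backup nodes

auth_pass 1111

}

virtual_ipaddress {

172.16.100.20/24 # VIP (must be in your own subnet)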

}

}

4.1.3 Start

systemctl restart keepalived

systemctl enable keepalived

systemctl status keepalived

4.2 HAProxy

4.2.1 Install HAProxy

yum -y install haproxy

4.2.2 HAProxy configuration file

global

log 127.0.0.1 local2

chroot /var/lib/haproxy

pidfile /var/run/haproxy.pid

maxconn 4000

user haproxy

group haproxy

daemon

stats socket /var/lib/haproxy/stats

defaults

mode http

log global

option httplog

option dontlognull

option http-server-close

option forwardfor except 127.0.0.0/8

option redispatch

retries 3

timeout http-request 10s

timeout queue 1m

timeout connect 10s

timeout client 1m

timeout server 1m

timeout http-keep-alive 10s

timeout check 10s

maxconn 3000

frontend main *:5000

acl url_static path_beg -i /static /images /javascript /stylesheets

acl url_static path_end -i .jpg .gif .png .css .js

use_backend static if url_static

default_backend k8s-master

backend static

balance roundrobin

server static 127.0.0.1:6443 check

backend k8s-master

balance roundrobin

server master01 172.16.100.21:6443 check

server master02 172.16.100.22:6443 check

server master03 172.16.100.23:6443 check

4.2.3 Start

systemctl restart haproxy

systemctl enable haproxy

systemctl status haproxy

5. containerd deployment

5.1 Load the kernel modules containerd needs

cat <<EOF | sudo tee /etc/modules-load.d/containerd.conf

overlay

br_netfilter

EOF

systemctl restart systemd-modules-load.service

cat <<EOF | sudo tee /etc/sysctl.d/99-kubernetes-cri.conf

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

EOF

# Apply the kernel parameters

sysctl --system

5.2 Install containerd with yum

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

sed -i 's+download.docker.com+mirrors.aliyun.com/docker-ce+' /etc/yum.repos.d/docker-ce.repo

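List the containerd packages available in the yum repositories:

yum list | grep containerd

Download and install:

yum install -y containerd.io

# Create the containerd configuration directory

mkdir /etc/containerd -p

# Generate the default configuration file

containerd config default > /etc/containerd/config.toml

# Edit the configuration file: use the systemd cgroup driver and a reachable pause image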

sed -i "s/SystemdCgroup = .*/SystemdCgroup = true/" /etc/containerd/config.toml

sed -i 's#sandbox_image = .*#sandbox_image = \"registry.aliyuncs.com/google_containers/pause:3.6\"#' /etc/containerd/config.toml

systemctl enable containerd

systemctl restart containerd

ctr version

runc -version

5.3 Install crictl

tar -zxf crictl-v1.27.0-linux-amd64.tar.gz && mv crictl /usr/bin

cat > /etc/crictl.yaml << EOF

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

EOF

6. etcd deployment

6.1 Generate certificates

mkdir -p ~/TLS/{etcd,k8s}

cd ~/TLS/etcd

# Self-signed CA:

cat > ca-config.json << EOF

{

"signing": {

"default": {

"expiry": "876000h"

},

"profiles": {

"www": {

"expiry": "876000h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json << EOF

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Guangdong",

"ST": "Guangdong"

}

]

}

EOF

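# Generate the CA certificate:

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

# This produces ca.pem and ca-key.pem.

# Use the self-signed CA to issue the etcd HTTPS certificate

# Create the certificate signing request file: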

cat > server-csr.json << EOF

{

"CN": "etcd",

"hosts": [

"172.16.100.20",

"172.16.100.21",

"172.16.100.22",

"172.16.100.23"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Guangdong",

"ST": "Guangdong"

}

]

}

EOF

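# Note: the IPs in the hosts field above are the cluster-internal communication IPs of all etcd nodes; none may be missing. To make later scaling easier, you can list a few spare IPs in advance.

# Generate the certificate:

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

# This produces server.pem and server-key.pem.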

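6.2 Unpack the release and distribute it

1. About etcd:

etcd is a distributed key-value store that Kubernetes uses for all of its data, so an etcd database has to be prepared first. To avoid a single point of failure, etcd should be deployed as a cluster. This guide uses 3 machines, which tolerates the failure of 1; a 5-machine cluster tolerates the failure of 2.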
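The fault-tolerance figures above follow from majority quorum. As a small sketch (the function names `quorum` and `tolerated_failures` are made up for illustration), the arithmetic can be checked in the shell:

```shell
#!/usr/bin/env bash
# Majority quorum for a cluster of n members:
#   quorum             = floor(n / 2) + 1
#   tolerated failures = n - quorum
# Function names are illustrative only.
quorum() { echo $(( $1 / 2 + 1 )); }
tolerated_failures() { echo $(( $1 - ($1 / 2 + 1) )); }

for n in 1 3 5; do
  echo "members=$n quorum=$(quorum "$n") tolerated_failures=$(tolerated_failures "$n")"
done
```

An even member count adds no tolerance over the odd count below it (4 members still tolerate only 1 failure), which is why odd sizes are the usual choice.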

Download: https://github.com/etcd-io/etcd/releases

The following steps are run on node pc1 (master01, 172.16.100.21, in this environment).

2. Install and configure etcd

mkdir /opt/etcd/{bin,cfg,ssl} -p

tar zxvf etcd-v3.5.8-linux-amd64.tar.gz

mv etcd-v3.5.8-linux-amd64/{etcd,etcdctl} /opt/etcd/bin/

6.3 etcd configuration file

###### On each node, change ETCD_NAME, ETCD_LISTEN_PEER_URLS, ETCD_LISTEN_CLIENT_URLS, ETCD_INITIAL_ADVERTISE_PEER_URLS, and ETCD_ADVERTISE_CLIENT_URLS

# etcd configuration file for pc-1 (etcd-1)

cat > /opt/etcd/cfg/etcd.conf << EOF

#[Member]

ETCD_NAME="etcd-1"

ETCD_DATA_DIR="/opt/etcd/data"

ETCD_LISTEN_PEER_URLS="http://172.16.100.21:2380"

ETCD_LISTEN_CLIENT_URLS="http://172.16.100.21:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="http://172.16.100.21:2380"

ETCD_ADVERTISE_CLIENT_URLS="http://172.16.100.21:2379"

ETCD_INITIAL_CLUSTER="etcd-1=http://172.16.100.21:2380,etcd-2=http://172.16.100.22:2380,etcd-3=http://172.16.100.23:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF
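Since only the member name and IP change between nodes, the file can be rendered from two arguments. The following is a sketch only: `render_etcd_conf` is a hypothetical helper, and the keys and cluster list are copied from the file above.

```shell
#!/usr/bin/env bash
# render_etcd_conf NAME IP: print an etcd.conf for one member to stdout.
# Hypothetical helper; keys and the cluster list are copied from the file above.
render_etcd_conf() {
  local name="$1" ip="$2"
  cat <<EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/opt/etcd/data"
ETCD_LISTEN_PEER_URLS="http://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="http://${ip}:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="http://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="http://${ip}:2379"
ETCD_INITIAL_CLUSTER="etcd-1=http://172.16.100.21:2380,etcd-2=http://172.16.100.22:2380,etcd-3=http://172.16.100.23:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Preview the file for the second member (nothing is written to disk).
render_etcd_conf etcd-2 172.16.100.22
```

On the target node the output would be redirected to /opt/etcd/cfg/etcd.conf.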

6.4 Copy certificates

# Copy the certificates generated above to the path referenced by the configuration:

cp ~/TLS/etcd/ca*pem ~/TLS/etcd/server*pem /opt/etcd/ssl/

6.5 Manage etcd with systemd

cat > /usr/lib/systemd/system/etcd.service << EOF

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/opt/etcd/cfg/etcd.conf

ExecStart=/opt/etcd/bin/etcd \

--cert-file=/opt/etcd/ssl/server.pem \

--key-file=/opt/etcd/ssl/server-key.pem \

--peer-cert-file=/opt/etcd/ssl/server.pem \

--peer-key-file=/opt/etcd/ssl/server-key.pem \

--trusted-ca-file=/opt/etcd/ssl/ca.pem \

--peer-trusted-ca-file=/opt/etcd/ssl/ca.pem \

--logger=zap

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

# Start etcd:

systemctl daemon-reload

systemctl restart etcd

systemctl enable etcd

6.6 Verify

ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem --cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem --endpoints="http://172.16.100.21:2379,http://172.16.100.22:2379,http://172.16.100.23:2379" endpoint health --write-out=table

7. Kubernetes component deployment (1.27.x)

7.1 Deploy kube-apiserver (master)

7.1.1 Generate certificates (master01)

cd ~/TLS/k8s

cat > ca-config.json << EOF

{

"signing": {

"default": {

"expiry": "876000h"

},

"profiles": {

"kubernetes": {

"expiry": "876000h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json << EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Guangdong",

"ST": "Guangdong",

"O": "k8s",

"OU": "System"

}

]

}

EOF

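# Generate the CA certificate:

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

# This produces ca.pem and ca-key.pem.

# Use the self-signed CA to issue the kube-apiserver HTTPS certificate

# Create the certificate signing request file: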

cat > server-csr.json << EOF

{

"CN": "kubernetes",

"hosts": [

"172.16.100.20",

"172.16.100.21",

"172.16.100.22",

"172.16.100.23",

"10.0.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Guangdong",

"ST": "Guangdong",

"O": "k8s",

"OU": "System"

}

]

}

EOF

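# Note: the IPs in the hosts field above are all Master, LB, and VIP addresses; none may be missing. To make later scaling easier, you can list a few spare IPs in advance.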

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

# This produces server.pem and server-key.pem.

7.1.2 Unpack and copy the binaries to the install path

cd ~/k8s_file

mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

tar -zxf kubernetes-server-linux-amd64.tar.gz

cd kubernetes/server/bin

cp kube-apiserver kube-scheduler kube-controller-manager /opt/kubernetes/bin

cp kubectl /usr/bin/

7.1.3 kube-apiserver configuration file

### On each master, change --bind-address and --advertise-address to that master's IP

cat > /opt/kubernetes/cfg/kube-apiserver.conf << EOF


KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--v=2 \

--etcd-servers=http://172.16.100.21:2379,http://172.16.100.22:2379,http://172.16.100.23:2379 \

--bind-address=172.16.100.21 \

--secure-port=6443 \

--advertise-address=172.16.100.21 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--authorization-mode=RBAC,Node \

--enable-bootstrap-token-auth=true \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-32767 \

--kubelet-client-certificate=/opt/kubernetes/ssl/server.pem \

--kubelet-client-key=/opt/kubernetes/ssl/server-key.pem \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--service-account-issuer=api \

--service-account-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem \

--requestheader-client-ca-file=/opt/kubernetes/ssl/ca.pem \

--proxy-client-cert-file=/opt/kubernetes/ssl/server.pem \

--proxy-client-key-file=/opt/kubernetes/ssl/server-key.pem \

--requestheader-allowed-names=kubernetes \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-group-headers=X-Remote-Group \

--requestheader-username-headers=X-Remote-User \

--enable-aggregator-routing=true \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--service-account-issuer=https://kubernetes.default.svc.cluster.local \

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \

--audit-log-path=/opt/kubernetes/logs/k8s-audit.log"


EOF

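Note: when a line ends with two backslashes (\\), the first is an escape character and the second is the line continuation; the escape makes the heredoc write a literal \ into the file so the line breaks are kept. With a single \ at the end of a line, the shell joins the lines itself and the value is written as one long line, which also works.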

- --v: log verbosity
- --etcd-servers: etcd cluster endpoints
- --bind-address: listen address
- --secure-port: HTTPS port
- --advertise-address: address advertised to the cluster
- --allow-privileged: allow privileged containers
- --service-cluster-ip-range: Service virtual IP range
- --enable-admission-plugins: admission control plugins
- --authorization-mode: authorization modes; enables RBAC and Node authorization
- --enable-bootstrap-token-auth: enable the TLS bootstrap mechanism
- --token-auth-file: bootstrap token file
- --service-node-port-range: default port range for NodePort Services
- --kubelet-client-xxx: client certificate the apiserver uses to access kubelets
- --tls-xxx-file: apiserver HTTPS certificate
- Required since version 1.20: --service-account-issuer, --service-account-signing-key-file
- --etcd-xxxfile: certificates for connecting to the etcd cluster
- --audit-log-xxx: audit log settings
- Aggregation layer settings: --requestheader-client-ca-file, --proxy-client-cert-file, --proxy-client-key-file, --requestheader-allowed-names, --requestheader-extra-headers-prefix, --requestheader-group-headers, --requestheader-username-headers, --enable-aggregator-routing
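The backslash behaviour inside an unquoted heredoc is easy to get wrong, so here is a minimal demonstration. The functions `write_single` and `write_double` and the `OPTS` value are made up for this sketch; they only show how the shell treats `\` and `\\` at the end of a heredoc line.

```shell
#!/usr/bin/env bash
# In an unquoted heredoc, a backslash followed by a newline is removed by the
# shell (the two lines are joined), while "\\" is written as one literal "\".
write_single() {
  cat <<EOF
OPTS="--a=1 \
--b=2"
EOF
}

write_double() {
  cat <<EOF
OPTS="--a=1 \\
--b=2"
EOF
}

echo "single backslash:"; write_single
echo "double backslash:"; write_double
```

The single-backslash form yields one line; the double-backslash form yields two lines, the first ending in a literal `\`.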

7.1.4 Copy certificates

# Copy the certificates generated above to the path referenced by the configuration:

cp ~/TLS/k8s/ca*pem ~/TLS/k8s/server*pem /opt/kubernetes/ssl/

scp ~/TLS/k8s/* master02:/root/TLS/k8s/

scp ~/TLS/k8s/* master03:/root/TLS/k8s/

scp ~/TLS/k8s/ca*pem ~/TLS/k8s/server*pem master02:/opt/kubernetes/ssl/

scp ~/TLS/k8s/ca*pem ~/TLS/k8s/server*pem master03:/opt/kubernetes/ssl/

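7.1.5 Enable TLS bootstrapping

# Enable the TLS bootstrapping mechanism

TLS bootstrapping: once the master apiserver enables TLS authentication, the kubelet and kube-proxy on each node must use valid certificates signed by the CA to communicate with kube-apiserver. With many nodes, issuing these client certificates by hand is a lot of work and makes scaling the cluster harder. To simplify this, Kubernetes provides TLS bootstrapping to issue client certificates automatically: the kubelet requests a certificate from the apiserver as a low-privilege user, and the certificate is signed dynamically. This approach is strongly recommended on nodes. It currently applies mainly to the kubelet; kube-proxy still uses a certificate that we issue ourselves.

Create the token file referenced in the configuration above: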

cat > /opt/kubernetes/cfg/token.csv << EOF

6e5339f4a66f3af96f8d0d683681cf41,kubelet-bootstrap,10001,"system:node-bootstrapper"

EOF

Format: token,user name,UID,group

You can also generate your own token and substitute it:

head -c 16 /dev/urandom | od -An -t x | tr -d ' '
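As a sketch, token generation and the CSV line can be combined so the two never drift apart. `new_token` and `token_csv_line` are hypothetical helper names; `od -t x1` is used here instead of `-t x` so the output is one hex pair per byte.

```shell
#!/usr/bin/env bash
# new_token: print 16 random bytes as 32 lowercase hex characters.
new_token() { head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'; }

# token_csv_line TOKEN: print one line in the "token,user,UID,group" format
# used by token.csv above.
token_csv_line() {
  printf '%s,kubelet-bootstrap,10001,"system:node-bootstrapper"\n' "$1"
}

TOKEN="$(new_token)"
token_csv_line "$TOKEN"
```

The same TOKEN value then has to be used in the bootstrap kubeconfig in 7.5.3.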

7.1.6 Manage kube-apiserver with systemd

cat > /usr/lib/systemd/system/kube-apiserver.service << EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-apiserver.conf

ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

# Start and enable at boot

systemctl daemon-reload

systemctl restart kube-apiserver

systemctl enable kube-apiserver

7.1.7 Verify

netstat -tnlp | grep kube-apiserver

curl https://IP/

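7.2 Deploy kube-controller-manager (master)

7.2.1 Generate certificates (all masters)

# Generate the kubeconfig file

# Generate the kube-controller-manager certificate:

# Switch to the working directory

cd ~/TLS/k8s

# Create the certificate signing request file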

cat > kube-controller-manager-csr.json << EOF

{

"CN": "system:kube-controller-manager",

"hosts": [""],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Guangdong",

"ST": "Guangdong",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

# Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

7.2.2 kube-controller-manager configuration file

# Deploy kube-controller-manager

# 1. Create the configuration file

cat > /opt/kubernetes/cfg/kube-controller-manager.conf << EOF

KUBE_CONTROLLER_MANAGER_OPTS=" \\

--v=2 \\

--leader-elect=true \\

--kubeconfig=/opt/kubernetes/cfg/kube-controller-manager.kubeconfig \\

--bind-address=127.0.0.1 \\

--allocate-node-cidrs=true \\

--cluster-cidr=10.244.0.0/16 \\

--service-cluster-ip-range=10.0.0.0/24 \\

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \\

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--root-ca-file=/opt/kubernetes/ssl/ca.pem \\

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--cluster-signing-duration=87600h0m0s"

EOF

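- --kubeconfig: kubeconfig file used to connect to the apiserver
- --leader-elect: automatic leader election when several instances of this component run (HA)
- --cluster-signing-cert-file / --cluster-signing-key-file: the CA that automatically issues kubelet certificates; must be the same CA the apiserver uses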

7.2.3 Generate the kubeconfig file

### Change the IP address in KUBE_APISERVER as needed

# Generate the kubeconfig file (these are shell commands; run them directly in the terminal):

KUBE_CONFIG="/opt/kubernetes/cfg/kube-controller-manager.kubeconfig"

KUBE_APISERVER="https://172.16.100.23:6443"

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-credentials kube-controller-manager \

--client-certificate=./kube-controller-manager.pem \

--client-key=./kube-controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-controller-manager \

--kubeconfig=${KUBE_CONFIG}

kubectl config use-context default --kubeconfig=${KUBE_CONFIG}
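The same four `kubectl config` calls recur for every component in this guide, differing only in file, server, user, and certificate paths. As a sketch, they could be wrapped in one function. `make_kubeconfig` and the `KUBECTL` override variable are hypothetical names introduced here; the flags are the ones already used above.

```shell
#!/usr/bin/env bash
# make_kubeconfig FILE SERVER USER CERT KEY
# Hypothetical wrapper around the four kubectl config calls shown above.
# Set KUBECTL="echo kubectl" to print the commands instead of running them.
KUBECTL="${KUBECTL:-kubectl}"

make_kubeconfig() {
  local file="$1" server="$2" user="$3" cert="$4" key="$5"
  $KUBECTL config set-cluster kubernetes \
    --certificate-authority=/opt/kubernetes/ssl/ca.pem \
    --embed-certs=true --server="$server" --kubeconfig="$file" &&
  $KUBECTL config set-credentials "$user" \
    --client-certificate="$cert" --client-key="$key" \
    --embed-certs=true --kubeconfig="$file" &&
  $KUBECTL config set-context default \
    --cluster=kubernetes --user="$user" --kubeconfig="$file" &&
  $KUBECTL config use-context default --kubeconfig="$file"
}

# Example call (not executed here), equivalent to the commands above:
#   make_kubeconfig /opt/kubernetes/cfg/kube-controller-manager.kubeconfig \
#     https://172.16.100.23:6443 kube-controller-manager \
#     ./kube-controller-manager.pem ./kube-controller-manager-key.pem
```

Running it once with the dry-run override is a cheap way to review the generated commands before touching any kubeconfig.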

7.2.4 Manage kube-controller-manager with systemd

cat > /usr/lib/systemd/system/kube-controller-manager.service << EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-controller-manager.conf

ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

# Start and enable at boot

systemctl daemon-reload

systemctl restart kube-controller-manager

systemctl enable kube-controller-manager

7.2.5 Verify

netstat -tnlp | grep kube-controlle

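7.3 Deploy kube-scheduler (master01)

7.3.1 Generate certificates

# Generate the kubeconfig file

# Generate the kube-scheduler certificate:

# Switch to the working directory

cd ~/TLS/k8s

# Create the certificate signing request file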

cat > kube-scheduler-csr.json << EOF

{

"CN": "system:kube-scheduler",

"hosts": [""],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Guangdong",

"ST": "Guangdong",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

# Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

7.3.2 kube-scheduler configuration file

# Deploy kube-scheduler

# 1. Create the configuration file

cat > /opt/kubernetes/cfg/kube-scheduler.conf << EOF

KUBE_SCHEDULER_OPTS=" \\

--v=2 \\

--leader-elect \\

--kubeconfig=/opt/kubernetes/cfg/kube-scheduler.kubeconfig \\

--bind-address=127.0.0.1"

EOF

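- --kubeconfig: kubeconfig file used to connect to the apiserver
- --leader-elect: automatic leader election when several instances of this component run (HA)

7.3.3 Generate the kubeconfig file

### Change the IP address in KUBE_APISERVER as needed

# Generate the kubeconfig file: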

KUBE_CONFIG="/opt/kubernetes/cfg/kube-scheduler.kubeconfig"

KUBE_APISERVER="https://172.16.100.21:6443"

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-credentials kube-scheduler \

--client-certificate=./kube-scheduler.pem \

--client-key=./kube-scheduler-key.pem \

--embed-certs=true \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-scheduler \

--kubeconfig=${KUBE_CONFIG}

kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

7.3.4 Manage kube-scheduler with systemd

cat > /usr/lib/systemd/system/kube-scheduler.service << EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-scheduler.conf

ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

# Start and enable at boot

systemctl daemon-reload

systemctl restart kube-scheduler

systemctl enable kube-scheduler

7.3.5 Verify

netstat -tnlp | grep kube-scheduler

7.4 Deploy kubectl (master)

7.4.1 Generate certificates (all masters)

# Generate the certificate kubectl uses to connect to the cluster:

cd /root/TLS/k8s/

cat > admin-csr.json <<EOF

{

"CN": "admin",

"hosts": [""],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Guangdong",

"ST": "Guangdong",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

7.4.2 Generate the kubeconfig file

### Change the IP address in KUBE_APISERVER as needed

mkdir -p /root/.kube

KUBE_CONFIG="/root/.kube/config"

KUBE_APISERVER="https://172.16.100.21:6443"

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-credentials cluster-admin \

--client-certificate=./admin.pem \

--client-key=./admin-key.pem \

--embed-certs=true \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-context default \

--cluster=kubernetes \

--user=cluster-admin \

--kubeconfig=${KUBE_CONFIG}

kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

7.4.3 Check cluster component status

kubectl get cs

# Authorize the kubelet-bootstrap user to request certificates

kubectl create clusterrolebinding kubelet-bootstrap \

--clusterrole=system:node-bootstrapper \

--user=kubelet-bootstrap

7.5 Deploy kubelet

Copy the binaries into place

# Change to the release directory and copy the binaries

cd ~/k8s_file/

cd kubernetes/server/bin/

cp kubelet kube-proxy /opt/kubernetes/bin

7.5.1 kubelet configuration file

##### Change --hostname-override on each node

cat > /opt/kubernetes/cfg/kubelet.conf << EOF


KUBELET_OPTS=" \

--v=2 \

--hostname-override=master01 \

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \

--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \

--config=/opt/kubernetes/cfg/kubelet-config.yml \

--cert-dir=/opt/kubernetes/ssl \

--runtime-request-timeout=15m \

--container-runtime-endpoint=unix:///run/containerd/containerd.sock \

--cgroup-driver=systemd \

--node-labels=node.kubernetes.io/node=''"


EOF

7.5.2 Configure kubelet-config.yml

cat > /opt/kubernetes/cfg/kubelet-config.yml << EOF

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 0.0.0.0

port: 10250

readOnlyPort: 10255

cgroupDriver: systemd

clusterDNS:

- 10.0.0.2

clusterDomain: cluster.local

failSwapOn: false

authentication:

anonymous:

enabled: false

webhook:

cacheTTL: 2m0s

enabled: true

x509:

clientCAFile: /opt/kubernetes/ssl/ca.pem

authorization:

mode: Webhook

webhook:

cacheAuthorizedTTL: 5m0s

cacheUnauthorizedTTL: 30s

evictionHard:

imagefs.available: 15%

memory.available: 100Mi

nodefs.available: 10%

nodefs.inodesFree: 5%

maxOpenFiles: 1000000

maxPods: 110

EOF

7.5.3 Generate the kubeconfig file

# Generate the bootstrap kubeconfig the kubelet uses when it first joins the cluster

KUBE_CONFIG="/opt/kubernetes/cfg/bootstrap.kubeconfig"

KUBE_APISERVER="https://172.16.100.23:6443"

# Replace with the token from token.csv

TOKEN="6e5339f4a66f3af96f8d0d683681cf41"

# Generate the kubelet bootstrap kubeconfig file

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-credentials "kubelet-bootstrap" \

--token=${TOKEN} \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-context default \

--cluster=kubernetes \

--user="kubelet-bootstrap" \

--kubeconfig=${KUBE_CONFIG}

kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

7.5.4 Manage kubelet with systemd

cat > /usr/lib/systemd/system/kubelet.service << EOF

[Unit]

Description=Kubernetes Kubelet

After=containerd.service

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kubelet.conf

ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

# Start and enable at boot

systemctl daemon-reload

systemctl restart kubelet

systemctl enable kubelet

7.5.5 Verify

kubectl get csr

# Approve the request (replace $NAME with a name from the list above)

kubectl certificate approve $NAME

7.6 Deploy kube-proxy (master01)

7.6.1 Generate certificates

# Generate the kube-proxy.kubeconfig file

# Switch to the working directory

cd ~/TLS/k8s

# Create the certificate signing request file

cat > kube-proxy-csr.json << EOF

{

"CN": "system:kube-proxy",

"hosts": [""],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Guangdong",

"ST": "Guangdong",

"O": "k8s",

"OU": "System"

}

]

}

EOF

# Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

7.6.2 kube-proxy configuration file

cat > /opt/kubernetes/cfg/kube-proxy.conf << EOF

KUBE_PROXY_OPTS=" \\

--v=2 \\

--config=/opt/kubernetes/cfg/kube-proxy-config.yml"

EOF

7.6.3 Configure kube-proxy-config.yml

cat > /opt/kubernetes/cfg/kube-proxy-config.yml << EOF

kind: KubeProxyConfiguration

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 0.0.0.0

metricsBindAddress: 0.0.0.0:10249

clientConnection:

kubeconfig: /opt/kubernetes/cfg/kube-proxy.kubeconfig

hostnameOverride: master01

clusterCIDR: 10.244.0.0/16

mode: ipvs

ipvs:

scheduler: "rr"

iptables:

masqueradeAll: true

EOF

7.6.4 Generate the kubeconfig file

### Change the IP address in KUBE_APISERVER as needed

KUBE_CONFIG="/opt/kubernetes/cfg/kube-proxy.kubeconfig"

KUBE_APISERVER="https://172.16.100.21:6443"

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-credentials kube-proxy \

--client-certificate=./kube-proxy.pem \

--client-key=./kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=${KUBE_CONFIG}

kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

7.6.5 Manage kube-proxy with systemd

cat > /usr/lib/systemd/system/kube-proxy.service << EOF

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-proxy.conf

ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

# Start and enable at boot

systemctl daemon-reload

systemctl restart kube-proxy

systemctl enable kube-proxy

7.7 Add a master

7.7.1 Copy the certificates from master01

scp ~/TLS/k8s/ca*pem ~/TLS/k8s/server*pem master02:/opt/kubernetes/ssl/

7.7.2 Enable TLS bootstrapping

# Enable the TLS bootstrapping mechanism (background as described in 7.1.5)

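Create the token file referenced in the configuration. Use the same token as on master01 so that the bootstrap kubeconfig from 7.5.3 is accepted by every apiserver:

cat > /opt/kubernetes/cfg/token.csv << EOF

6e5339f4a66f3af96f8d0d683681cf41,kubelet-bootstrap,10001,"system:node-bootstrapper"

EOF

Format: token,user name,UID,group

You can also generate your own token and substitute it (on all masters):

head -c 16 /dev/urandom | od -An -t x | tr -d ' '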

7.7.3 kube-apiserver configuration file

cat > /opt/kubernetes/cfg/kube-apiserver.conf << EOF


KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--v=2 \

--etcd-servers=http://172.16.100.21:2379,http://172.16.100.22:2379,http://172.16.100.23:2379 \

--bind-address=172.16.100.22 \

--secure-port=6443 \

--advertise-address=172.16.100.22 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--authorization-mode=RBAC,Node \

--enable-bootstrap-token-auth=true \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-32767 \

--kubelet-client-certificate=/opt/kubernetes/ssl/server.pem \

--kubelet-client-key=/opt/kubernetes/ssl/server-key.pem \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--service-account-issuer=api \

--service-account-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem \

--requestheader-client-ca-file=/opt/kubernetes/ssl/ca.pem \

--proxy-client-cert-file=/opt/kubernetes/ssl/server.pem \

--proxy-client-key-file=/opt/kubernetes/ssl/server-key.pem \

--requestheader-allowed-names=kubernetes \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-group-headers=X-Remote-Group \

--requestheader-username-headers=X-Remote-User \

--enable-aggregator-routing=true \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--service-account-issuer=https://kubernetes.default.svc.cluster.local \

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \

--audit-log-path=/opt/kubernetes/logs/k8s-audit.log"


EOF

7.7.4 Manage kube-apiserver with systemd

cat > /usr/lib/systemd/system/kube-apiserver.service << EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-apiserver.conf

ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

# Start and enable at boot

systemctl daemon-reload

systemctl restart kube-apiserver

systemctl enable kube-apiserver

7.7.5 Verify

7.8 Add worker nodes

7.8.1 Copy files to the worker nodes (kubelet, kube-proxy)

scp -r /opt/kubernetes root@node01:/opt/

scp -r /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@node01:/usr/lib/systemd/system

scp -r /opt/kubernetes root@node02:/opt/

scp -r /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@node02:/usr/lib/systemd/system

7.8.2 Changes on node01

Delete the copied kubelet certificates and kubeconfig file, then set the node name:

rm -rf /opt/kubernetes/cfg/kubelet.kubeconfig

rm -rf /opt/kubernetes/ssl/kubelet*

rm -rf /opt/kubernetes/logs/*

sed -i 's/master01/node01/' /opt/kubernetes/cfg/kubelet.conf

sed -i 's/master01/node01/' /opt/kubernetes/cfg/kube-proxy-config.yml

systemctl daemon-reload

systemctl restart kubelet kube-proxy

systemctl enable kubelet kube-proxy

7.8.3 Changes on node02

Delete the copied kubelet certificates and kubeconfig file, then set the node name:

rm -rf /opt/kubernetes/cfg/kubelet.kubeconfig

rm -rf /opt/kubernetes/ssl/kubelet*

rm -rf /opt/kubernetes/logs/*

sed -i 's/master01/node02/' /opt/kubernetes/cfg/kubelet.conf

sed -i 's/master01/node02/' /opt/kubernetes/cfg/kube-proxy-config.yml

systemctl daemon-reload

systemctl restart kubelet kube-proxy

systemctl enable kubelet kube-proxy

7.9 Approve the kubelet certificate requests on a master

for kube in `kubectl get csr | grep node | awk '{print $1}'`

do

kubectl certificate approve $kube

done
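The loop above approves every CSR whose line contains "node", including ones that are already approved. A narrower variant, sketched here under the assumption that the last column of `kubectl get csr` is CONDITION, selects only pending requests. `pending_csrs` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# pending_csrs: read `kubectl get csr` output on stdin and print the names of
# requests whose last column (CONDITION) is exactly "Pending".
# The header line is skipped.
pending_csrs() { awk 'NR > 1 && $NF == "Pending" { print $1 }'; }

# Intended use on a master (not executed here):
#   for csr in $(kubectl get csr | pending_csrs); do
#     kubectl certificate approve "$csr"
#   done
```

Filtering on the condition column keeps the loop idempotent when it is rerun after adding more nodes.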

# Check the cluster

kubectl get node

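7.10 Deploy the Calico network

Many network plugins exist and only one needs to be deployed; Calico is recommended.

Calico is a pure layer-3 data center networking solution that supports a wide range of platforms, including Kubernetes and OpenStack.

On every compute node, Calico uses the Linux kernel to implement an efficient virtual router (vRouter) that forwards traffic, and each vRouter uses BGP to advertise the routes of the workloads running on it to the rest of the Calico network.

Calico also implements Kubernetes network policy, providing ACL functionality.

1. Download Calico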

wget https://docs.tigera.io/archive/v3.25/manifests/calico.yaml

vim calico.yaml

...

- name: CALICO_IPV4POOL_CIDR

value: "10.244.0.0/16"

...
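The edit can also be done without an editor. This sketch assumes the downloaded manifest ships the two lines commented out with the default value "192.168.0.0/16"; check your copy first. `set_calico_pool_cidr` is a hypothetical helper that filters stdin to stdout.

```shell
#!/usr/bin/env bash
# set_calico_pool_cidr CIDR: uncomment CALICO_IPV4POOL_CIDR and set its value.
# Assumes the manifest contains the commented default shown in the sample below.
set_calico_pool_cidr() {
  sed -e 's|# - name: CALICO_IPV4POOL_CIDR|- name: CALICO_IPV4POOL_CIDR|' \
      -e "s|#   value: \"192.168.0.0/16\"|  value: \"$1\"|"
}

# Demonstration on a two-line sample (indentation as assumed for the manifest).
printf '%s\n' \
  '            # - name: CALICO_IPV4POOL_CIDR' \
  '            #   value: "192.168.0.0/16"' | set_calico_pool_cidr 10.244.0.0/16
```

Against the real file this would be used as `set_calico_pool_cidr 10.244.0.0/16 < calico.yaml > calico-custom.yaml`, where calico-custom.yaml is an arbitrary output name.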

kubectl apply -f calico.yaml

kubectl get pod -n kube-system

kubectl get nodes

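# Authorize the apiserver's client certificate user to access the kubelet API. The user name must match the CN of server.pem, which is "kubernetes" in 7.1.1.

kubectl create clusterrolebinding kube-apiserver --clusterrole=cluster-admin --user=kubernetes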

8. Troubleshooting

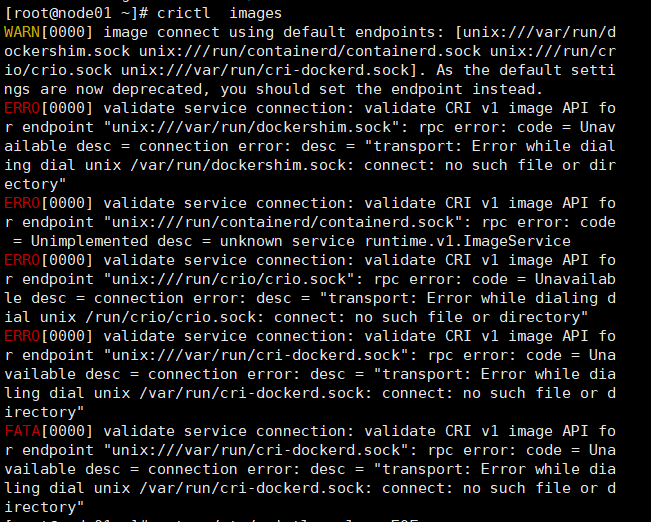

Problem 1: errors after installing crictl

Solution:

cat > /etc/crictl.yaml << EOF

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

EOF

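Problem 2: after approving all CSRs, kubectl get node shows only one node

(No screenshot.) This is a kube-apiserver issue: the kube-apiserver certificate must be copied from master01 to master02 and master03. If each master generates and uses its own certificate, this problem appears.

Source: https://blog.csdn.net/weixin_44857388/article/details/135164602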
