Deploying a Kubernetes (k8s) Cluster and Setting Up the Kuboard Web Dashboard

2023-12-13 04:06:52

Required machines

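| Hostname | IP address | Role | Specs |
|---|---|---|---|
| k8s-master | 192.168.231.134 | Master (control-plane) node | 2 CPU cores, 4 GB RAM, CentOS 7 |
| k8s-node1 | 192.168.231.135 | Worker node | 2 CPU cores, 4 GB RAM, CentOS 7 |
| k8s-node2 | 192.168.231.136 | Worker node | 2 CPU cores, 4 GB RAM, CentOS 7 |

The master node must have at least 2 CPU cores and at least 2 GB of RAM; otherwise Kubernetes will not start (kubeadm init's preflight checks fail).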

1. Cluster environment setup [run on all three machines]

1. Disable the firewall and SELinux
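The original does not list the commands for this step. A minimal sketch for CentOS 7, assuming the stock firewalld service and the default /etc/selinux/config; the helper names are hypothetical. `setenforce 0` switches SELinux to permissive for the running system, and the config edit disables it from the next boot:

```shell
# Hypothetical helpers for step 1, assuming CentOS 7 with firewalld.

# Set SELINUX=disabled in an SELinux config file (default /etc/selinux/config).
# The change takes effect after the next reboot.
disable_selinux_config() {
  local cfg="${1:-/etc/selinux/config}"
  sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$cfg"
}

# Stop and disable firewalld, switch SELinux to permissive now, and persist the change.
disable_firewall_and_selinux() {
  systemctl stop firewalld
  systemctl disable firewalld
  # setenforce exits non-zero when SELinux is already disabled; that is fine here
  setenforce 0 || true
  disable_selinux_config /etc/selinux/config
}
```

Run `disable_firewall_and_selinux` as root on all three machines.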

2. Time synchronization

yum -y install ntpdate

ntpdate ntp.aliyun.com
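`ntpdate` sets the clock only once, and the nodes can drift apart again afterwards. One way to keep them in sync (not part of the original) is a cron job. A sketch that writes a file under /etc/cron.d; the file name, the 30-minute interval and the helper name are assumptions:

```shell
# Hypothetical helper: write a cron.d entry that re-syncs the clock every 30 minutes.
# The file name and interval are assumptions; cron.d lines need a user field ("root").
write_ntp_cron() {
  local file="${1:-/etc/cron.d/ntpdate-aliyun}"
  printf '%s\n' '*/30 * * * * root /usr/sbin/ntpdate ntp.aliyun.com >/dev/null 2>&1' > "$file"
  chmod 0644 "$file"
}
```

Run `write_ntp_cron` as root on each node; crond picks up new files in /etc/cron.d on its own.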

3. Configure a static IP address
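The original gives no commands for this step. On CentOS 7 a static address is commonly set in /etc/sysconfig/network-scripts/ifcfg-<interface>. A sketch that prints such a file; the interface name ens33, the /24 netmask, the gateway 192.168.231.2 and the DNS server 223.5.5.5 are all assumptions to replace with your own network's values, and the helper name is hypothetical:

```shell
# Hypothetical helper: print an ifcfg file for a static IPv4 address.
# Arguments: interface, IP address, gateway, DNS server. A /24 netmask is assumed.
render_ifcfg() {
  local dev="$1" ip="$2" gw="$3" dns="$4"
  cat <<EOF
TYPE=Ethernet
DEVICE=${dev}
NAME=${dev}
ONBOOT=yes
BOOTPROTO=static
IPADDR=${ip}
NETMASK=255.255.255.0
GATEWAY=${gw}
DNS1=${dns}
EOF
}
```

For example, on k8s-master (after backing up the existing file): `render_ifcfg ens33 192.168.231.134 192.168.231.2 223.5.5.5 > /etc/sysconfig/network-scripts/ifcfg-ens33`, then `systemctl restart network`.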

4. Local hostname resolution

cat >> /etc/hosts <<EOF

192.168.231.134 k8s-master

192.168.231.135 k8s-node1

192.168.231.136 k8s-node2

EOF
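Each machine's hostname should also match its entry (the original does not show this), and running the heredoc a second time appends duplicate lines. A sketch that appends only the missing entries; the helper names are hypothetical:

```shell
# Hypothetical helpers: make the /etc/hosts entries idempotent.

# Succeeds if NAME appears as a hostname (field 2 or later) on a non-comment line of FILE.
host_known() {
  awk -v n="$2" '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
                 END { exit !found }' "$1"
}

# Append each "IP NAME" pair from the table below unless NAME is already listed.
add_hosts_entries() {
  local hosts="${1:-/etc/hosts}" ip name
  while read -r ip name; do
    [ -n "$ip" ] || continue
    host_known "$hosts" "$name" || printf '%s %s\n' "$ip" "$name" >> "$hosts"
  done <<'EOF'
192.168.231.134 k8s-master
192.168.231.135 k8s-node1
192.168.231.136 k8s-node2
EOF
}
```

On each machine, as root: `hostnamectl set-hostname k8s-master` (k8s-node1 or k8s-node2 on the workers), then `add_hosts_entries`.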

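5. Disable swap. Since Kubernetes 1.8, swap must be turned off; with the default configuration, kubelet will not start while swap is enabled.

# swapoff -a

swapoff only lasts until the next reboot, so also edit /etc/fstab to comment out the swap mount, then confirm with free -m that swap is off.

Comment out the swap entry: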

# sed -i 's/.*swap.*/#&/' /etc/fstab
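The sed command above prefixes # to every line that contains the string swap, including lines that are already commented out. A narrower sketch that only comments out active entries whose filesystem type (the third field) is swap; the helper name is hypothetical:

```shell
# Hypothetical helper: comment out active swap entries in an fstab-format file.
comment_swap_entries() {
  local fstab="${1:-/etc/fstab}"
  # Leave comment lines alone; prefix "#" when field 3 (the filesystem type) is "swap".
  awk '$1 !~ /^#/ && $3 == "swap" { print "#" $0; next } { print }' "$fstab" > "$fstab.tmp" &&
    cat "$fstab.tmp" > "$fstab" && rm -f "$fstab.tmp"
}
```

Usage: `comment_swap_entries /etc/fstab`. Writing back with `cat` keeps the original file's owner and permissions.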

# free -m

total used free shared buff/cache available

Mem: 3935 144 3415 8 375 3518

Swap: 0 0 0

2. Install Docker [all nodes]

Configure the Aliyun Docker yum repository:

# yum install -y yum-utils device-mapper-persistent-data lvm2 git

# yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

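Install the latest Docker version (Kubernetes 1.22 still supports Docker through the built-in dockershim, which was removed in 1.24):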

# yum install docker-ce -y
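Later in this guide, docker info reports the systemd cgroup driver. On CentOS 7 a freshly installed docker-ce normally reports cgroupfs, so if you want systemd as shown there, one common approach (not shown in the original) is to set it in /etc/docker/daemon.json before starting Docker. A sketch with a hypothetical helper name; since the kubelet configuration later copies whatever driver Docker reports, this step is optional:

```shell
# Hypothetical helper: write a Docker daemon.json that selects the systemd cgroup driver.
# Note: this overwrites any existing daemon.json in the target directory.
write_docker_daemon_json() {
  local dir="${1:-/etc/docker}"
  mkdir -p "$dir"
  cat > "$dir/daemon.json" <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}
```

Run `write_docker_daemon_json`, start (or restart) Docker, then `docker info --format '{{.CgroupDriver}}'` should print systemd.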

Start Docker and enable it at boot:

# systemctl enable docker

# systemctl start docker

3. Import the images into Docker [all nodes]

We already have the required image archives here, so we import them directly.

Extract the two archives:

# tar xf application.tar.xz

# tar xf kube-1.22.0.tar.xz

[root@k8s-master ~]# ls

anaconda-ks.cfg application application.tar.xz calico.yaml kube-1.22.0 kube-1.22.0.tar.xz

[root@k8s-master ~]# cd application/

[root@k8s-master application]# ls

images image.sh

### Run the image import script

[root@k8s-master application]# sh image.sh load

[root@k8s-master ~]# cd kube-1.22.0/

[root@k8s-master kube-1.22.0]# ls

get-docker-image.sh images

### Run the image import script

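[root@k8s-master kube-1.22.0]# sh get-docker-image.sh load

Important:

The images must be present on every machine.

Versions change from release to release. If initialization fails because of a version mismatch, the error message tells you which versions are required.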

The kubeadm version and the image versions must match.
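Before running kubeadm init, it can help to confirm that every image kubeadm expects is present. `kubeadm config images list --kubernetes-version v1.22.0` prints the expected image references; the sketch below compares them with `docker images` on the current node and prints any that are missing. The helper names are hypothetical:

```shell
# Hypothetical helper: print image references from REQUIRED_FILE that are absent from PRESENT_FILE.
# Both files contain one "repository:tag" per line.
missing_images() {
  # -v invert, -x whole line, -F fixed strings, -f patterns read from $2
  grep -vxFf "$2" "$1" || true
}

# Hypothetical helper: compare kubeadm's image list with the local Docker images.
check_node_images() {
  local version="${1:-v1.22.0}" req pres
  req=$(mktemp)
  pres=$(mktemp)
  kubeadm config images list --kubernetes-version "$version" > "$req"
  docker images --format '{{.Repository}}:{{.Tag}}' > "$pres"
  missing_images "$req" "$pres"
  rm -f "$req" "$pres"
}
```

Run `check_node_images` on each node; no output means nothing is missing. It compares names and tags only, so an image loaded under a different registry prefix is reported as missing.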

4. Install the kubeadm packages [all nodes]

Configure the Aliyun repository:

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

On all nodes:

1. Install dependencies and common tools

# yum install -y conntrack ntpdate ntp ipvsadm ipset jq iptables curl sysstat libseccomp wget vim net-tools git iproute lrzsz bash-completion tree bridge-utils unzip bind-utils gcc

2. Install the matching versions

# yum install -y kubelet-1.22.0-0.x86_64 kubeadm-1.22.0-0.x86_64 kubectl-1.22.0-0.x86_64

3. Load the IPVS kernel modules

# cat <<EOF > /etc/modules-load.d/ipvs.conf

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_nq

ip_vs_sed

ip_vs_ftp

nf_conntrack_ipv4

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

EOF

4. Kernel parameters

Set the forwarding-related kernel parameters; otherwise errors may occur:

# cat <<EOF > /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_forward=1

net.ipv4.tcp_tw_recycle=0

vm.swappiness=0

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_instances=8192

fs.inotify.max_user_watches=1048576

fs.file-max=52706963

fs.nr_open=52706963

net.ipv6.conf.all.disable_ipv6=1

net.netfilter.nf_conntrack_max=2310720

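EOF

At this point, checking whether the IPVS modules are loaded prints nothing, because /etc/modules-load.d is only read at boot: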

# lsmod | grep ip_vs

5. Configure and start the cluster

Before doing so, reboot all three servers:
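Rebooting loads the modules listed in /etc/modules-load.d/ipvs.conf and applies /etc/sysctl.d/k8s.conf. Note that the net.bridge.bridge-nf-call-* keys only exist once the br_netfilter module is loaded, and that module is not in the list above. As an alternative to rebooting (or to re-apply the settings after editing them), a sketch that loads the modules and then runs `sysctl --system`; the helper name and the MODPROBE/SYSCTL override variables are hypothetical and exist only so the function can be dry-run:

```shell
# Hypothetical helper: load kernel modules from a modules-load.d file, then apply sysctl files.
# MODPROBE and SYSCTL can be overridden (e.g. with "echo") for a dry run.
apply_kernel_settings() {
  local modfile="${1:-/etc/modules-load.d/ipvs.conf}"
  local modprobe_cmd="${MODPROBE:-modprobe}" sysctl_cmd="${SYSCTL:-sysctl}" mod
  # br_netfilter provides the net.bridge.bridge-nf-call-* keys
  "$modprobe_cmd" br_netfilter
  while read -r mod; do
    # Skip blank lines and comments
    case "$mod" in ''|'#'*) continue ;; esac
    "$modprobe_cmd" "$mod" || echo "warning: could not load $mod" >&2
  done < "$modfile"
  # Re-read /etc/sysctl.d/*.conf and the other standard sysctl locations
  "$sysctl_cmd" --system
}
```

Run `apply_kernel_settings` as root; afterwards `lsmod | grep ip_vs` should list the modules. To have br_netfilter loaded at boot as well, it could be added as a line to the module file.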

# reboot

Detect Docker's cgroup driver and store it in a variable:

[root@k8s-master ~]# DOCKER_CGROUPS=`docker info |grep 'Cgroup' | awk ' NR==1 {print $3}'`

[root@k8s-master ~]# echo $DOCKER_CGROUPS

systemd

Configure kubelet to use the same cgroup driver:

# cat >/etc/sysconfig/kubelet<<EOF

KUBELET_EXTRA_ARGS="--cgroup-driver=$DOCKER_CGROUPS --pod-infra-container-image=k8s.gcr.io/pause:3.5"

EOF

Start kubelet:

# systemctl daemon-reload

# systemctl enable kubelet && systemctl restart kubelet

Note:

If you run # systemctl status kubelet at this point, it reports an error:

Oct 11 00:26:43 node1 systemd[1]: kubelet.service: main process exited, code=exited, status=255/n/a

Oct 11 00:26:43 node1 systemd[1]: Unit kubelet.service entered failed state.

Oct 11 00:26:43 node1 systemd[1]: kubelet.service failed.

Running # journalctl -xefu kubelet to read the systemd journal reveals the real error:

unable to load client CA file /etc/kubernetes/pki/ca.crt: open /etc/kubernetes/pki/ca.crt: no such file or directory

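# This error goes away once kubeadm init has generated the CA certificate, so it can be ignored for now.

# In short, kubelet keeps restarting until kubeadm init has run.

6. Configure the master node [master only]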

Run the initialization:

[root@kub-k8s-master]# kubeadm init --kubernetes-version=v1.22.0 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.231.134

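Notes:

--apiserver-advertise-address=192.168.231.134: the master's IP address.

--kubernetes-version=v1.22.0: change this to the version you are installing.

The command prints a lot of output; pay attention to the last part: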

....

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config ###### these three commands must be run

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.231.134:6443 --token 93erio.hbn2ti6z50he0lqs \

--discovery-token-ca-cert-hash sha256:3bc60f06a19bd09f38f3e05e5cff4299011b7110ca3281796668f4edb29a56d9

Save the join command above. The complete initialization output also shows, step by step, the key stages of setting up a Kubernetes cluster by hand.

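The key stages are:

- [kubelet-start]: writes the kubelet configuration file /var/lib/kubelet/config.yaml
- [certs]: generates the various certificates
- [kubeconfig]: generates the kubeconfig files
- [bootstrap-token]: generates a token; record it, because kubeadm join needs it later to add nodes to the cluster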

Configure kubectl

Run the following on the master node:

[root@kub-k8s-master ~]# rm -rf $HOME/.kube

[root@kub-k8s-master ~]# mkdir -p $HOME/.kube

[root@kub-k8s-master ~]# cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

Check the nodes (the master reports NotReady until a network plugin is installed in the next step):

[root@k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready control-plane,master 5h5m v1.22.0

7. Install the network plugin [master only]

Run:

# curl -L https://docs.projectcalico.org/v3.22/manifests/calico.yaml -O
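The pod network CIDR passed to kubeadm init above is 10.244.0.0/16, while the commented-out CALICO_IPV4POOL_CIDR in Calico's manifest defaults to 192.168.0.0/16. Calico's installation guide says that on kubeadm clusters the pod CIDR is detected automatically; if you prefer to set the pool explicitly, the variable is uncommented and set to the pod CIDR. A sketch that does this with sed, assuming the two lines read `# - name: CALICO_IPV4POOL_CIDR` and `#   value: "192.168.0.0/16"` in your copy (check first, since the layout may differ between Calico versions); the helper name is hypothetical:

```shell
# Hypothetical helper: uncomment CALICO_IPV4POOL_CIDR in calico.yaml and set it to CIDR.
set_calico_pool_cidr() {
  local manifest="$1" cidr="${2:-10.244.0.0/16}"
  # On the name line, drop the "# "; then move to the next line (n) and rewrite the value.
  # Indentation is preserved so the entry lines up with the other env variables.
  sed -i \
    -e '/# - name: CALICO_IPV4POOL_CIDR/{' \
    -e 's/# - name:/- name:/' \
    -e 'n' \
    -e "s|#   value: \".*\"|  value: \"$cidr\"|" \
    -e '}' "$manifest"
}
```

Usage: `set_calico_pool_cidr calico.yaml 10.244.0.0/16`, then `grep -A1 CALICO_IPV4POOL_CIDR calico.yaml` to check the result before applying the manifest.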

# kubectl apply -f calico.yaml

# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-6d9cdcd744-8jt5g 1/1 Running 0 6m50s

kube-system calico-node-rkz4s 1/1 Running 0 6m50s

kube-system coredns-74ff55c5b-bcfzg 1/1 Running 0 52m

kube-system coredns-74ff55c5b-qxl6z 1/1 Running 0 52m

kube-system etcd-kub-k8s-master 1/1 Running 0 53m

kube-system kube-apiserver-kub-k8s-master 1/1 Running 0 53m

kube-system kube-controller-manager-kub-k8s-master 1/1 Running 0 53m

kube-system kube-proxy-gfhkf 1/1 Running 0 52m

kube-system kube-scheduler-kub-k8s-master 1/1 Running 0 53m

8. Join the worker nodes to the cluster [worker nodes only]

Run this on every worker node. It is the command printed when the master was initialized successfully:

# kubeadm join 192.168.231.134:6443 --token 93erio.hbn2ti6z50he0lqs \

--discovery-token-ca-cert-hash sha256:3bc60f06a19bd09f38f3e05e5cff4299011b7110ca3281796668f4edb29a56d9

9. Final checks [master only]

Check the pods:

[root@k8s-master ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

calico-kube-controllers-7c87c5f9b8-cbh9g 1/1 Running 2 (31m ago) 5h5m

calico-node-7m5tq 1/1 Running 1 (31m ago) 5h5m

calico-node-bth4k 1/1 Running 1 (31m ago) 5h5m

calico-node-zp4tx 1/1 Running 1 (31m ago) 5h5m

coredns-78fcd69978-ff6bj 1/1 Running 1 (31m ago) 5h9m

coredns-78fcd69978-n4x55 1/1 Running 1 (31m ago) 5h9m

etcd-k8s-master 1/1 Running 1 (31m ago) 5h9m

kube-apiserver-k8s-master 1/1 Running 1 (31m ago) 5h9m

kube-controller-manager-k8s-master 1/1 Running 1 (31m ago) 5h9m

kube-proxy-bmv9m 1/1 Running 1 (31m ago) 5h9m

kube-proxy-k7zrz 1/1 Running 1 (31m ago) 5h6m

kube-proxy-vcg62 1/1 Running 1 (31m ago) 5h6m

kube-scheduler-k8s-master 1/1 Running 1 (31m ago) 5h9m

Check the nodes:

[root@k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready control-plane,master 5h10m v1.22.0

k8s-node1 Ready <none> 5h6m v1.22.0

k8s-node2 Ready <none> 5h6m v1.22.0

The cluster setup is now complete.

Troubleshooting

#> If cluster initialization fails (run on every node):

$ kubeadm reset -f; ipvsadm --clear; rm -rf ~/.kube

$ systemctl restart kubelet
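`kubeadm reset` does not remove the CNI configuration in /etc/cni/net.d or flush iptables rules; its own output points this out. A fuller cleanup sketch for a node you really want to wipe (it deletes files and flushes all iptables rules, so be careful on machines that run anything else). The helper names are hypothetical, and DRY_RUN=1 makes them print the commands instead of running them:

```shell
# Hypothetical helper: run a command, or only print it when DRY_RUN=1.
maybe_run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

# Hypothetical helper: reset a kubeadm node and remove what "kubeadm reset" leaves behind.
full_reset() {
  maybe_run kubeadm reset -f
  maybe_run rm -rf /etc/cni/net.d "$HOME/.kube"
  # Flush the filter, nat and mangle tables and delete user-defined chains
  maybe_run iptables -F
  maybe_run iptables -t nat -F
  maybe_run iptables -t mangle -F
  maybe_run iptables -X
  maybe_run ipvsadm --clear
  maybe_run systemctl restart kubelet
}
```

Preview with `DRY_RUN=1 full_reset`, then run `full_reset` as root.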

#> If you have forgotten the token, print a new join command:

$ kubeadm token create --print-join-command

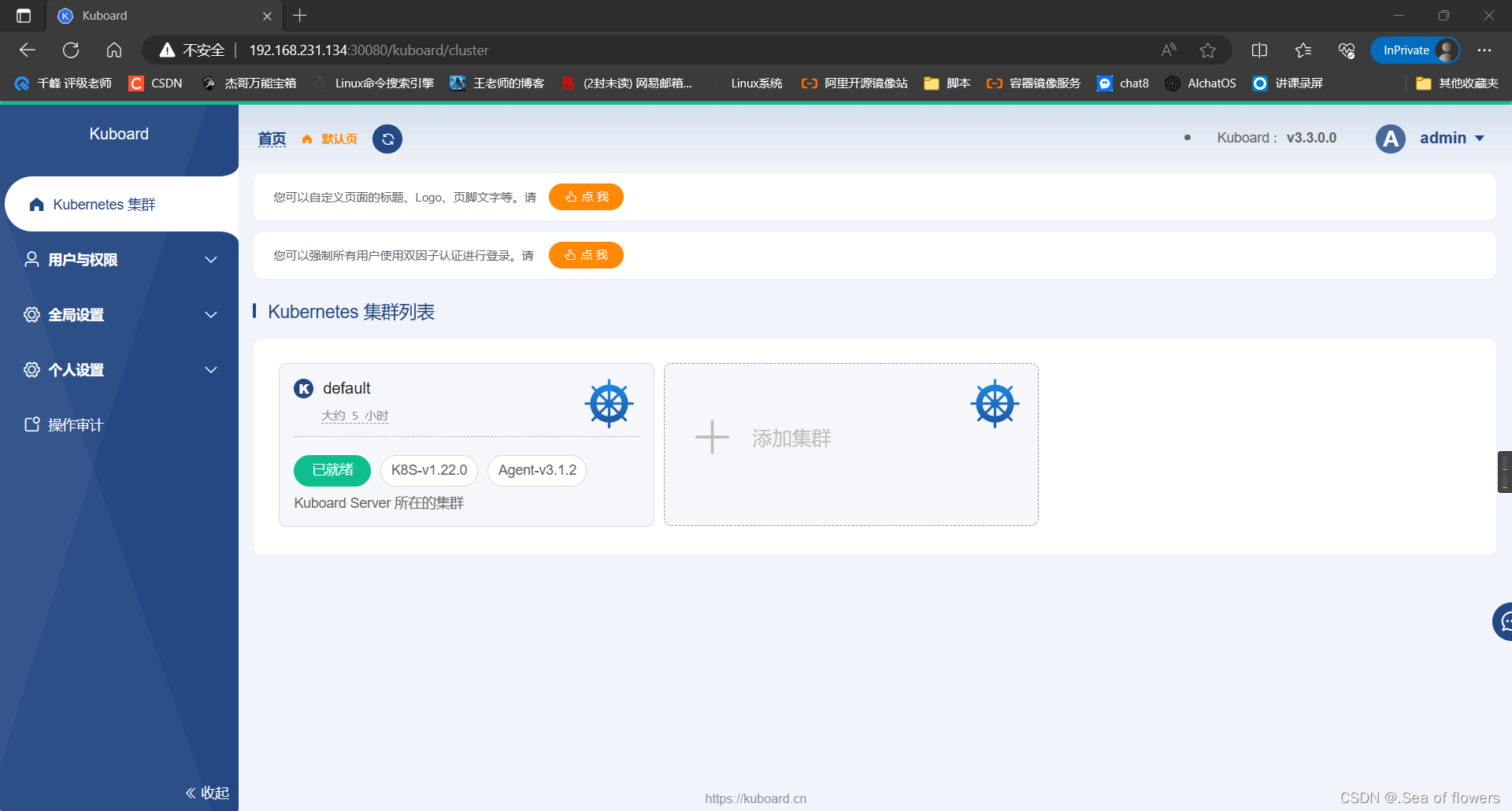
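Bootstrap tokens expire after 24 hours by default, which is a common reason a saved join command stops working. A sketch that creates a fresh join command on the master and runs it on each worker over SSH; it assumes root SSH access from the master to the workers, and the helper name and the KUBEADM/SSH override variables (for a dry run) are hypothetical:

```shell
# Hypothetical helper: create a fresh join command on the master and run it on each worker.
# Assumes root SSH access from the master to the workers.
# KUBEADM and SSH can be overridden (e.g. with "echo") for a dry run.
join_workers() {
  local kubeadm_cmd="${KUBEADM:-kubeadm}" ssh_cmd="${SSH:-ssh}" join node
  join=$("$kubeadm_cmd" token create --print-join-command) || return 1
  for node in "$@"; do
    echo "joining $node" >&2
    "$ssh_cmd" "root@$node" "$join"
  done
}
```

Usage on the master: `join_workers k8s-node1 k8s-node2`.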

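To join an additional control-plane (master) node, also upload the control-plane certificates; this prints the certificate key that kubeadm join needs for it:

$ kubeadm init phase upload-certs --upload-certs

Visual dashboard deployment: Kuboard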

[root@kube-master ~]# kubectl apply -f https://addons.kuboard.cn/kuboard/kuboard-v3.yaml

It prints:

namespace/kuboard created

configmap/kuboard-v3-config created

serviceaccount/kuboard-boostrap created

clusterrolebinding.rbac.authorization.k8s.io/kuboard-boostrap-crb created

daemonset.apps/kuboard-etcd created

deployment.apps/kuboard-v3 created

service/kuboard-v3 created

Check whether the Kuboard pods have started:

[root@kube-master ~]# kubectl get pod -n kuboard

NAME READY STATUS RESTARTS AGE

kuboard-agent-2-5c54dcb98f-4vqvc 1/1 Running 24 (7m50s ago) 16d

kuboard-agent-747b97fdb7-j42wr 1/1 Running 24 (7m34s ago) 16d

kuboard-etcd-ccdxk 1/1 Running 16 (8m58s ago) 16d

kuboard-etcd-k586q 1/1 Running 16 (8m53s ago) 16d

kuboard-questdb-bd65d6b96-rgx4x 1/1 Running 10 (8m53s ago) 16d

kuboard-v3-5fc46b5557-zwnsf 1/1 Running 12 (8m53s ago) 16d

Startup can take a while, so be patient.

Check the Service object; the web UI is exposed on NodePort 30080:

[root@k8s-master ~]# kubectl get svc -n kuboard

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kuboard-v3 NodePort 10.101.117.230 <none> 80:30080/TCP,10081:30081/TCP,10081:30081/UDP 134m

Open the master's IP address in a web browser:

http://192.168.231.134:30080

Username: admin

Password: Kuboard123
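Since the Kuboard pods can take a while to start, a sketch that waits for them with kubectl wait and then prints the HTTP status code of the web UI on NodePort 30080 (a 2xx or 3xx code suggests the UI is answering). The 600-second timeout, the helper name and the KUBECTL/CURL override variables are assumptions:

```shell
# Hypothetical helper: wait for the Kuboard pods, then print the HTTP status of the web UI.
# KUBECTL and CURL can be overridden (e.g. with "echo") for a dry run.
check_kuboard() {
  local host="${1:-192.168.231.134}"
  local kubectl_cmd="${KUBECTL:-kubectl}" curl_cmd="${CURL:-curl}"
  "$kubectl_cmd" wait --for=condition=Ready pod --all -n kuboard --timeout=600s || return 1
  "$curl_cmd" -s -o /dev/null -w '%{http_code}\n' "http://$host:30080/"
}
```

Usage on the master: `check_kuboard 192.168.231.134`.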

Source: https://blog.csdn.net/m0_59933574/article/details/134936188

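This article was submitted by an internet user. The views expressed are the author's own and do not represent the position of this site. This site only provides information storage space, does not own the rights, and bears no related legal responsibility. If any content infringes rights, is illegal, or is factually inaccurate, please contact 我的编程经验分享网 at veading@qq.com to file a complaint; confirmed content will be removed immediately.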
