[Audio/Video FFmpeg] Building a Qt Framework for Live Stream Pushing

2023-12-30 04:59:55

Three threads

One thread decodes video, one decodes audio, and one muxes the two and pushes the stream.
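The three-thread split can be sketched with the standard library alone. This is a minimal sketch and not the article's implementation: `BlockingQueue` and `runPipeline` are hypothetical names, strings stand in for decoded frames, and a single shared queue is assumed where a real pipeline would more likely keep separate video and audio queues.

```cpp
#include <cassert>
#include <condition_variable>
#include <mutex>
#include <queue>
#include <string>
#include <thread>
#include <utility>

// Hypothetical thread-safe queue; it stands in for videoQueue and an audio queue.
template <typename T>
class BlockingQueue {
public:
    void push(T value) {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            items_.push(std::move(value));
        }
        ready_.notify_one();
    }

    // Blocks until an item is available, then removes and returns it.
    T pop() {
        std::unique_lock<std::mutex> lock(mutex_);
        ready_.wait(lock, [this] { return !items_.empty(); });
        T value = std::move(items_.front());
        items_.pop();
        return value;
    }

private:
    std::mutex mutex_;
    std::condition_variable ready_;
    std::queue<T> items_;
};

// Starts two producer threads and one consumer thread.
// Returns how many items the consumer received.
int runPipeline(int framesPerProducer) {
    assert(framesPerProducer >= 0);
    BlockingQueue<std::string> queue;

    auto producer = [&queue, framesPerProducer](const std::string& tag) {
        for (int i = 0; i < framesPerProducer; ++i)
            queue.push(tag + std::to_string(i));  // stand-in for a decoded frame
    };

    int received = 0;
    std::thread video(producer, "video");
    std::thread audio(producer, "audio");
    std::thread muxer([&queue, &received, framesPerProducer] {
        for (int i = 0; i < 2 * framesPerProducer; ++i) {
            queue.pop();  // stand-in for muxing the item and pushing it out
            ++received;
        }
    });

    video.join();
    audio.join();
    muxer.join();
    return received;
}
```

Calling `runPipeline(100)` from `main` returns 200 once all three threads have joined. In the Qt version each `std::thread` would correspond to a `QThread` subclass such as `VideodecodeThread`.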

Video decoding thread

#include "videodecodethread.h"

VideodecodeThread::VideodecodeThread(QObject *parent)

:QThread(parent)

{

avdevice_register_all();

avformat_network_init();

}

void VideodecodeThread::run()

{

fmt = av_find_input_format("dshow");

av_dict_set(&options, "video_size", "640x480", 0); // documented form is WIDTHxHEIGHT

av_dict_set(&options, "framerate", "30", 0);

ret = avformat_open_input(&pFormatCtx, "video=ov9734_azurewave_camera", fmt, &options);

if (ret < 0)

{

qDebug() << "Couldn't open input stream." << ret;

return;

}

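// The second argument is a per-stream array of codec options, not the demuxer options; none are needed here.

ret = avformat_find_stream_info(pFormatCtx, nullptr);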

if(ret < 0)

{

qDebug()<< "Couldn't find stream information.";

return;

}

videoIndex = av_find_best_stream(pFormatCtx, AVMEDIA_TYPE_VIDEO, -1, -1, &pAvCodec, 0);

if(videoIndex < 0)

{

qDebug()<< "Couldn't av_find_best_stream.";

return;

}

pAvCodec = avcodec_find_decoder(pFormatCtx->streams[videoIndex]->codecpar->codec_id);

if(!pAvCodec)

{

qDebug()<< "Couldn't avcodec_find_decoder.";

return;

}

qDebug()<<"avcodec_open2 pAVCodec->name:" << QString::fromStdString(pAvCodec->name);

if(pFormatCtx->streams[videoIndex]->avg_frame_rate.den != 0)

{

// av_q2d() converts the AVRational to double; num / den on two ints would truncate (30000/1001 gives 29).

double fps_ = av_q2d(pFormatCtx->streams[videoIndex]->avg_frame_rate);

qDebug() <<"fps:" << fps_;

}

int64_t video_length_sec_ = pFormatCtx->duration/AV_TIME_BASE;

qDebug() <<"video_length_sec_:" << video_length_sec_;

pAvCodecCtx = avcodec_alloc_context3(pAvCodec);

if(!pAvCodecCtx)

{

qDebug()<< "Couldn't avcodec_alloc_context3.";

return;

}

ret = avcodec_parameters_to_context(pAvCodecCtx, pFormatCtx->streams[videoIndex]->codecpar);

if(ret < 0)

{

qDebug()<< "Couldn't avcodec_parameters_to_context.";

return;

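}

// The decoder must be opened before any packet can be sent to it.

ret = avcodec_open2(pAvCodecCtx, pAvCodec, nullptr);

if(ret < 0)

{

qDebug()<< "Couldn't avcodec_open2.";

return;

}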

pAvFrame = av_frame_alloc();

pAvFrameYUV = av_frame_alloc();

// pSwsCtx = sws_getContext(pAvCodecCtx->width, pAvCodecCtx->height, pAvCodecCtx->pix_fmt,

// pAvCodecCtx->width, pAvCodecCtx->height, AV_PIX_FMT_YUV420P9,

// SWS_BICUBIC, NULL, NULL, NULL);

//m_size = av_image_get_buffer_size(AVPixelFormat(AV_PIX_FMT_YUV420P9), pAvCodecCtx->width, pAvCodecCtx->height, 1);

// Attach a data buffer to the already allocated AVPicture structure

//av_image_fill_arrays(pAvFrameYUV->data, pAvFrameYUV->linesize, buffer, AV_PIX_FMT_BGR32, pAvCodecCtx->width, pAvCodecCtx->height, 1);

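// av_read_frame() fills the packet itself, so an empty packet is enough. Allocate one only if the header has not already done so.

if(!packet)

packet = av_packet_alloc();

if(!packet)

{

qDebug()<< "Couldn't av_packet_alloc.";

return;

}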

while(run_flag && !av_read_frame(pFormatCtx, packet))

{

if (packet->stream_index == videoIndex)

{

// Decode one frame of video data

int iGotPic = avcodec_send_packet(pAvCodecCtx, packet);

if(iGotPic != 0)

{

qDebug()<<"iVideoIndex avcodec_send_packet error";

av_packet_unref(packet); // release the packet before skipping it, otherwise it leaks

continue;

}

iGotPic = avcodec_receive_frame(pAvCodecCtx, pAvFrame);

if(iGotPic == 0){

// Convert the pixel format

// sws_scale(pSwsCtx, (uint8_t const * const *)pAvFrame->data, pAvFrame->linesize, 0,

// pAvFrame->height, pAvFrameYUV->data, pAvFrameYUV->linesize);

//buffer = (uint8_t*)av_malloc(pAvFrame->height * pAvFrame->linesize[0]);

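// pAvFrame->data is an array of plane pointers, not a C string, so copy the planes into one contiguous buffer (needs libavutil/imgutils.h).

int frameSize = av_image_get_buffer_size((AVPixelFormat)pAvFrame->format, pAvFrame->width, pAvFrame->height, 1);

if(frameSize > 0)

{

byte.resize(frameSize);

av_image_copy_to_buffer((uint8_t*)byte.data(), frameSize, (const uint8_t * const *)pAvFrame->data, pAvFrame->linesize, (AVPixelFormat)pAvFrame->format, pAvFrame->width, pAvFrame->height, 1);

videoQueue.push(byte);

videoCount++;

}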

}

}

av_packet_unref(packet);

}

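// Release what this run allocated; each call also resets its pointer to NULL.

av_frame_free(&pAvFrame);

av_frame_free(&pAvFrameYUV);

avcodec_free_context(&pAvCodecCtx);

avformat_close_input(&pFormatCtx);

av_dict_free(&options);

}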
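The decode loop hands every frame to `videoQueue` as one byte array. An `AVFrame` does not store its pixels that way: `data` holds one pointer per plane, and `linesize` gives the stride of each row, which may be larger than the visible width. The sketch below shows the row-by-row packing this implies, using only the standard library. `Plane` and `packPlanes` are hypothetical names introduced for illustration; in FFmpeg itself, `av_image_copy_to_buffer` performs this copy.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Hypothetical stand-in for one plane of a decoded frame.
struct Plane {
    const uint8_t* data;  // first byte of the plane
    int linesize;         // bytes per row in memory, may exceed the visible width
    int widthBytes;       // visible bytes per row
    int rows;             // number of rows in the plane
};

// Copies the visible bytes of every plane, row by row, into one contiguous buffer.
std::vector<uint8_t> packPlanes(const std::vector<Plane>& planes) {
    std::size_t total = 0;
    for (const Plane& p : planes) {
        assert(p.widthBytes <= p.linesize);
        total += static_cast<std::size_t>(p.widthBytes) * p.rows;
    }

    std::vector<uint8_t> out(total);
    uint8_t* dst = out.data();
    for (const Plane& p : planes) {
        for (int y = 0; y < p.rows; ++y) {
            // Skip the padding at the end of each source row.
            std::memcpy(dst, p.data + static_cast<std::size_t>(y) * p.linesize, p.widthBytes);
            dst += p.widthBytes;
        }
    }
    return out;
}
```

For a YUV420P frame there would be three planes, with the two chroma planes at half the width and half the height of the luma plane.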

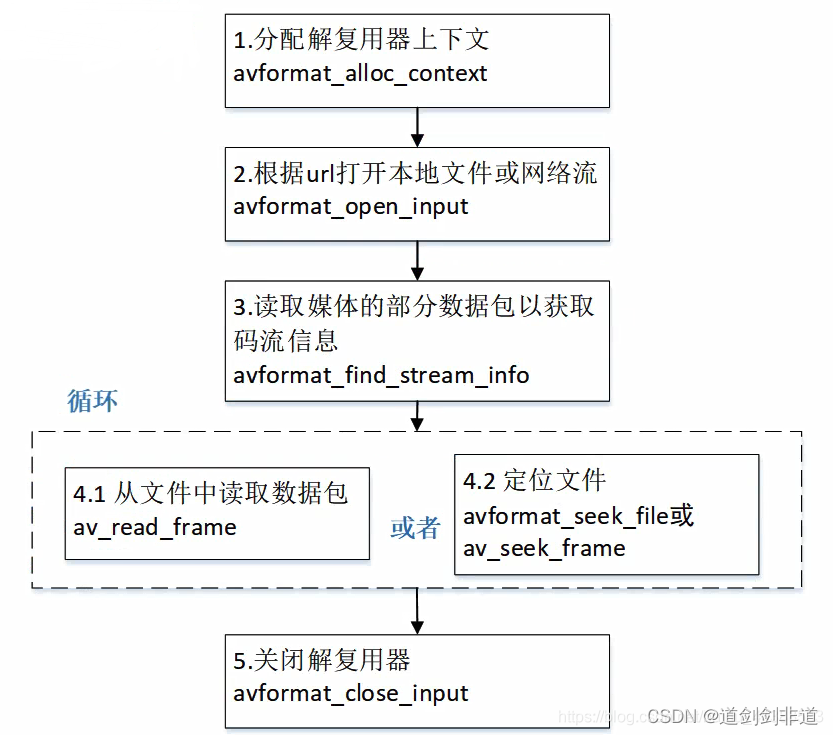

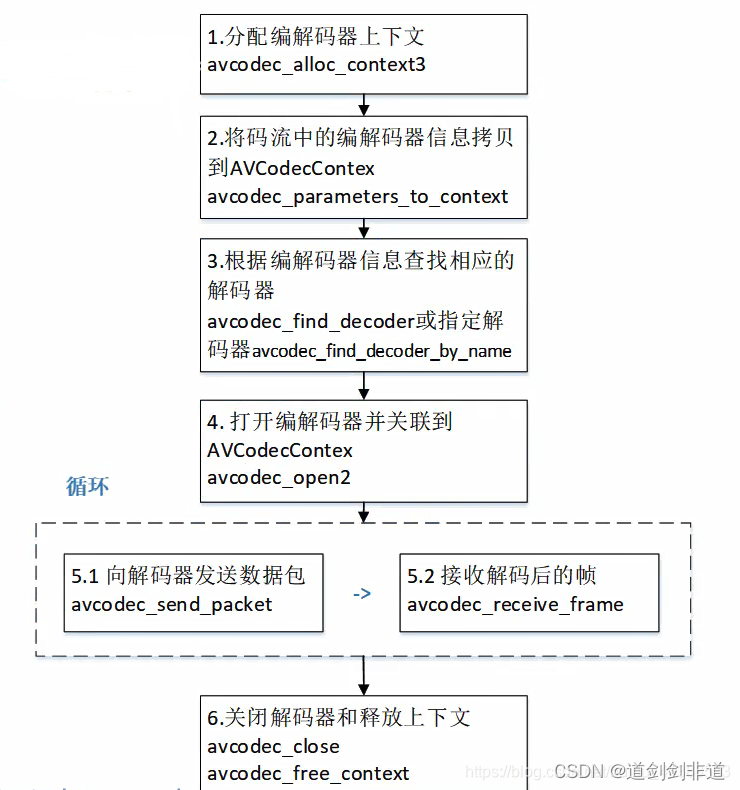

FFmpeg function walkthrough
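One detail of the listing is worth isolating: `avg_frame_rate` is an `AVRational`, a numerator/denominator pair of integers, so how it is divided matters. The sketch below mirrors that with a hypothetical `Rational` struct, so it builds without FFmpeg. `toFps` and `truncatedFps` are illustrative names; FFmpeg's own conversion helper is `av_q2d`.

```cpp
#include <cassert>

// Hypothetical mirror of FFmpeg's AVRational (numerator / denominator).
struct Rational {
    int num;
    int den;
};

// Returns the rate as a double, or 0.0 when the denominator is zero (rate unknown).
double toFps(Rational r) {
    return r.den != 0 ? static_cast<double>(r.num) / r.den : 0.0;
}

// Integer division, as written when both operands are int: the fraction is dropped.
int truncatedFps(Rational r) {
    assert(r.den != 0);
    return r.num / r.den;
}
```

For the common NTSC rate 30000/1001, `toFps` gives roughly 29.97 while `truncatedFps` gives 29, which is why the conversion should happen in floating point.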

The audio part and the muxing and stream-pushing process will be added later.

Source: https://blog.csdn.net/weixin_45397344/article/details/135226768